Items tagged with: Mastodon

Brave is charging $60 to remove features it added in the first place

Another reason to avoid using Brave.

https://www.xda-developers.com/brave-is-charging-60-to-remove-features-it-added-in-the-first-place/

#news #tech #technology #socialmedia #twitter #mastodon #bluesky #security #privacy

Brave is charging $60 to remove features it added in the first place

Pay more to get less. Simon Batt (XDA)

An interesting trend that @netzbegruenung documents here.

💬 "The assumption that X is still the undisputed market leader among microblogging services in Germany is outdated. The current figures show no measurable Bluesky hype, either globally or in Germany. Instead, Mastodon is the clear outperformer in Germany."

👉 https://netzbegruenung.de/blog/das-ende-der-dominanz-microblogging-wandel-in-deutschland-in-zahlen

"Mastodon sucks." Every last bit of Fedi is built by members of your community trying to provide a space to people for free, often at great expense to themselves. This isn't some social network we just rolled up and started using, we built it. I wish more people understood that before they slam it.

🌐 Be part of Berlin Fediverse Day 2026!

The largest community event around #Mastodon, #PeerTube, #Pixelfed, #Loops and other open networks invites you to a weekend full of talks, workshops and exchange about the decentralized open social web.

From 11 to 13 September 2026 in Berlin, developers, creatives, journalists, researchers, activists and more will discuss digital self-determination, open standards and the future of social media beyond #BigTech.

Funny observation: when I explain to people that there is no algorithm in the Fediverse deciding what they see, the first reaction is a puzzled "But... how do I know what's important, then?"

Um. You? You decide that? 😄

That really is the biggest adjustment. Not the technology, not federation, but curating for yourself again whom you follow and what you want to read.

It feels strange at first. Then liberating.

Mastodon, get ready: funk is coming to the Fediverse! 🎉

@mervelous and @remrow dropped the news at #RP26 that, starting in June, fresh, young content from the funk network will be coming here.

Looking forward to it! 🙂 Another step toward making the independent platforms more attractive through great content and thereby bringing more people to the platform.

@republica #Mastodon #neuhier #funk #Fediverse #republica #ÖRR

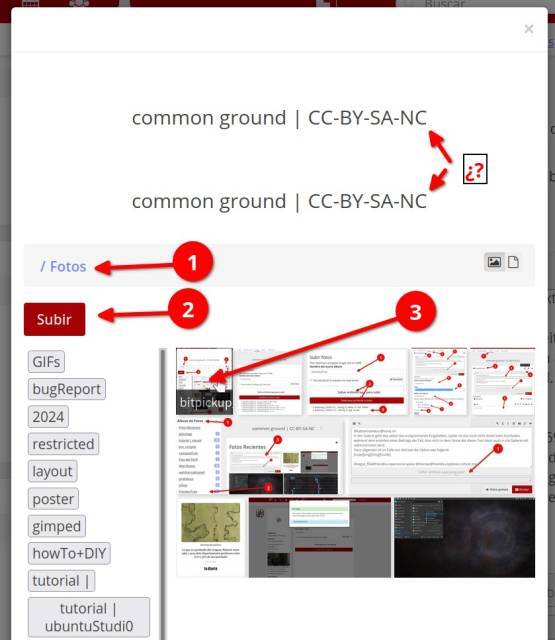

Titles appear as a content warning (CW) in Mastodon

Hi everyone,

I noticed that RSS feeds I subscribed to via Friendica (e.g. RND) are always shown collapsed in Mastodon apps. The article title apparently ends up as a content warning.

The same happens with my own posts when I give them a title.

On the Mastodon network: everything is fine

In my 2nd Mastodon account, Friendica: posts with a title are all collapsed

Is this known? Is something planned for this, or is there a workaround?

Thanks!

Happy ninth Mastodon Won't Survive Day to all who celebrate!

https://mashable.com/article/mastodon-wont-survive

Six reasons Mastodon won't survive

The hot new thing in social media has some big problems. Lance Ulanoff (Mashable)

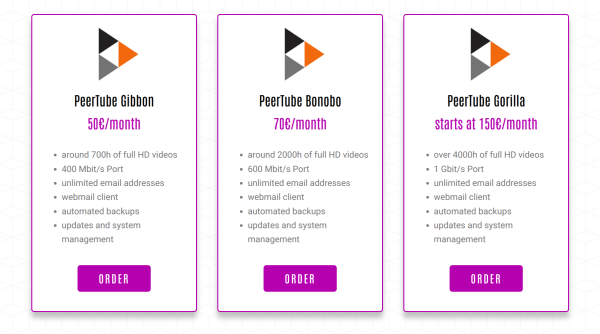

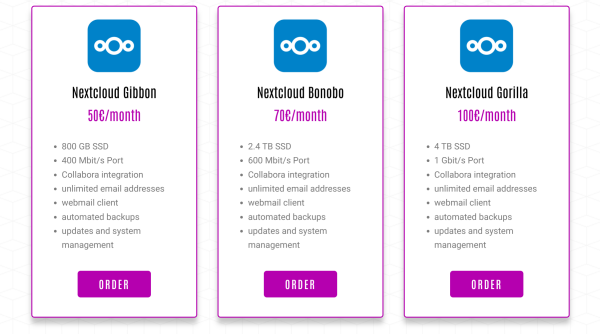

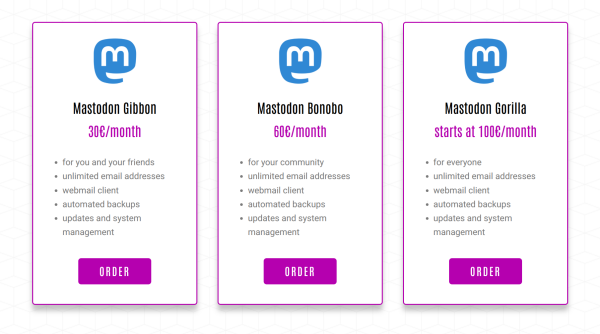

Do you want to have your own #Mastodon instance? How about a #Peertube, #Nextcloud, #Matrix or #Pixelfed instance? You can buy managed hosting for these services from https://webape.site/

#ManagedHosting means you don't need any technical skills or knowledge of how to maintain a Linux server; all of that will be done for you. The #Webape project is a small business run by @tio, who also made https://trom.tf and has been providing all of those #FOSS services for free since 2021.

Fedigroups?

I apologize if this is a boring old question. I followed a batch of fedigroups. They do not show up as groups in the Desktop browser GUI.

#mastodon't ?

WELCOME TO ‹SEZ FÜR ALLE!› - SEZ für alle!

That is why our demonstration on 21 February is crucial. Together we will show the Senate and WBM: Berlin will not give up this place without a fight! Because the... Initiative SEZ (SEZ für alle!)

Hey, if anyone is interested, we set up instances for Mastodon, Pixelfed, Friendica, Peertube, Nextcloud and more. See https://webape.site/

We charge for this so that we can afford to keep making our many trade-free services and projects. See https://tromsite.com/

If you are interested or want to help, you can also share this. We set up a separate VPS for each instance and provide updates, support and custom requests as much as we can, plus an email server for each instance.

#fedi #fediverse #mastodon #friendica #nextcloud #foss #opensource #pixelfed #hosting

As the prophecy foretold.

"The site includes stone rings and a possible mastodon carving, possibly dating back 9,000 years when the lakebed was dry."

https://news.artnet.com/art-world/prehistoric-structure-lake-michigan-stonehenge-2432737

For many years there has been the ARD/ZDF-Onlinestudie, which is now called the Medienstudie. I took a look at the 2025 study with regard to social media, with a particular focus on the #Fediverse. However, the only service it surveys is #Mastodon. Let's take a closer look at who uses it and how!

A thread. 🧵

A quick note up front on the study itself: the population is the German-speaking resident population aged 14 and over in Germany, the field period was spring 2025, n = 2,512, dual-frame sample (i.e. respondents were surveyed by telephone and online).

#Kommunikationswissenschaft #Science #Medien #SocialMedia #ARD #ZDF #Empirie #Befragung

Yes, but what about the reach?! How we once switched from "Twitter" to the "Fediverse" and never regretted it

#Fediverse #Mastodon #Twitter #X #SocialMedia #MicroBlogging #Reichweite #DIDAY #DID #DigitalIndependenceDay #DigitalerUnabhängigkeitstag #DUT

Instance admins: Meta and Twitch have both come out with rules about antisemitism in their latest Terms of Service that are measured but address the concerns of many Jews on the platform.

If I were to take those rules and provide you with language based on them that would help you implement them on your own sites, would you use it?

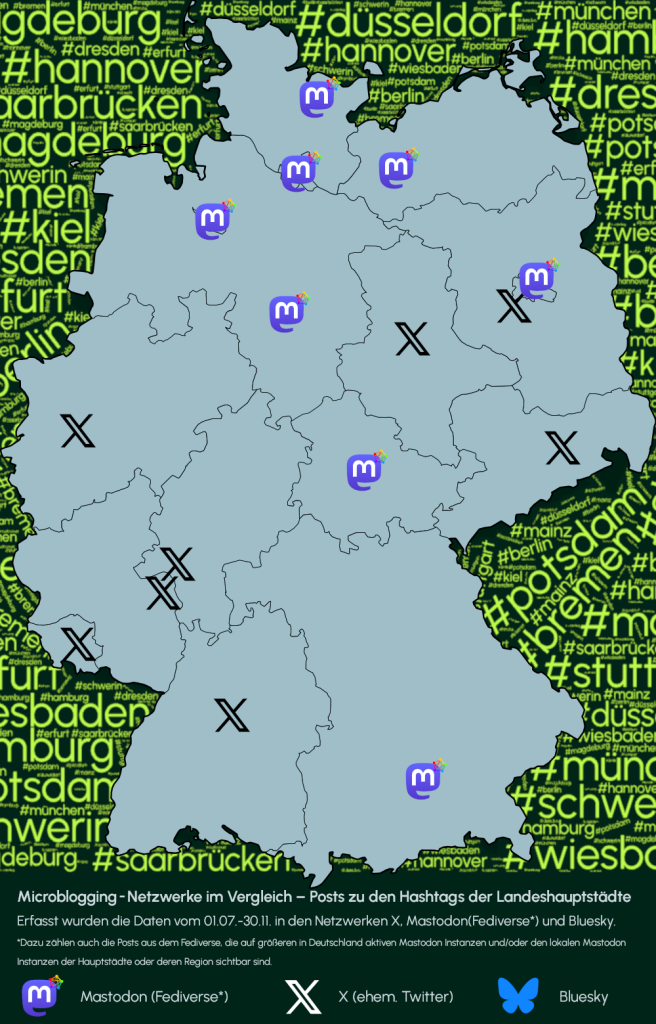

Which microblogging network leads in the number of posts under the hashtags of the German state capitals?

Here is the map! 📍

The data was collected from 1 July to 30 November 2025 on the networks X, Mastodon (Fediverse*) and Bluesky. All posts under #Berlin, #Hamburg, etc. were recorded.

#Berlin #Hamburg #München #Kiel #Bremen #Schwerin #Hannover #Wiesbaden #Mainz #Magdeburg #Saarbrücken #Potsdam #Erfurt #Düsseldorf #Dresden #Stuttgart

Hey #39C3!

Tomorrow, Day 3, 14:00, I will spontaneously be hosting a session in the @cbase Fediverse Assembly on “Lessons from a Public University: Bringing Institutions into the Fediverse” and sharing my experiences from the step-by-step approach at the University of Innsbruck, where we have a Mastodon instance on our servers, SSO-connected, based on a general strategic approach towards open platforms.

Happy to see a lot of Fediverse fighters!

https://events.ccc.de/congress/2025/hub/de/event/detail/lessons-from-a-public-university-bringing-institut

![[39c3] Lessons from a Public University: Bringing Institutions into the Fediverse](https://fika.grin.hu/photo/preview/600/554430)

[39c3] Lessons from a Public University: Bringing Institutions into the Fediverse

What happens when a public university decides to use the Fediverse as part of a holistic strategic approach to science communication? I will share honest insights from our work at the University of Inns... 39c3

Mastodon's character limit is such a weird, useless and pointless concept that I can't believe it is still there. People who want to say more than a paragraph need to split it into "threads"... insane!

Look:

How the fuck do you get to read and interact with a post divided into tens of comments?

This is silly as fuck, people.

If you have no character limit, guess what, you can still make short posts. But not vice-versa.

It is beyond me why this is still a thing in 2025. Artificial limitations.

Man I LOVE Friendica! A proper way to make posts and interact with people. And yet not many are aware of Friendica and how awesome it is.

@Meredith Whittaker you should either move to a Mastodon instance without a character limit or try Friendica. You can even use our node https://social.trom.tf - and that goes for anyone else who is bothered by this artificial limitation of Mastodon.

I not only maintain a list of digital service providers that operate outside U.S. jurisdiction, but also a list of Fediverse sites that use non-U.S. domain extensions.

If these sites also use web hosting outside the United States, their websites could be fully outside U.S. legal reach — which is a smart move. 😉

You can see the list here:

https://codeberg.org/Linux-Is-Best/The_Fediverse_Outside_United_States/src/branch/main/Index.md

#Mastodon #Misskey #PixelFed #PeerTube #CherryPick #Fediverse #ActivityPub #DigitalSovereignty

The_Fediverse_Outside_United_States/Index.md at main

The_Fediverse_Outside_United_States - A general list of Fedi sites outside the United States. Codeberg.org

Mastodon (representing the Fediverse) has been nominated for the audience prize of the Grimme Online Award. 🏆

You can vote for 3 projects here:

https://w1.grimme-online-award.de/goa/voting/ext_voting.pl

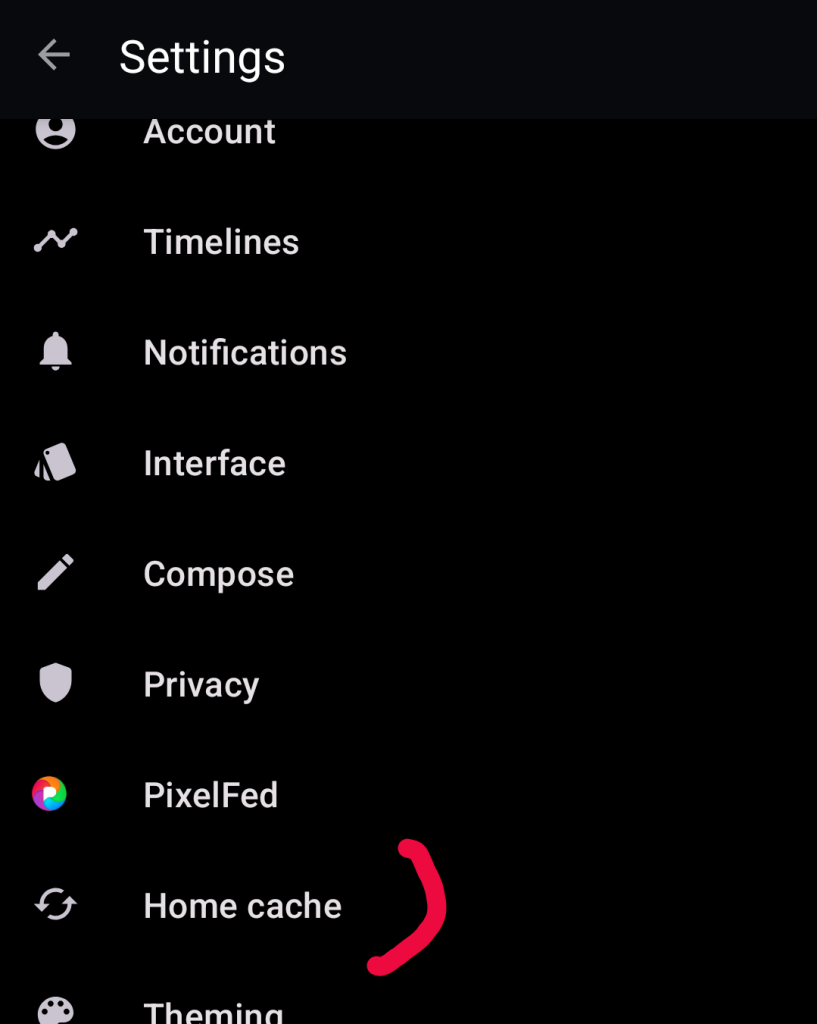

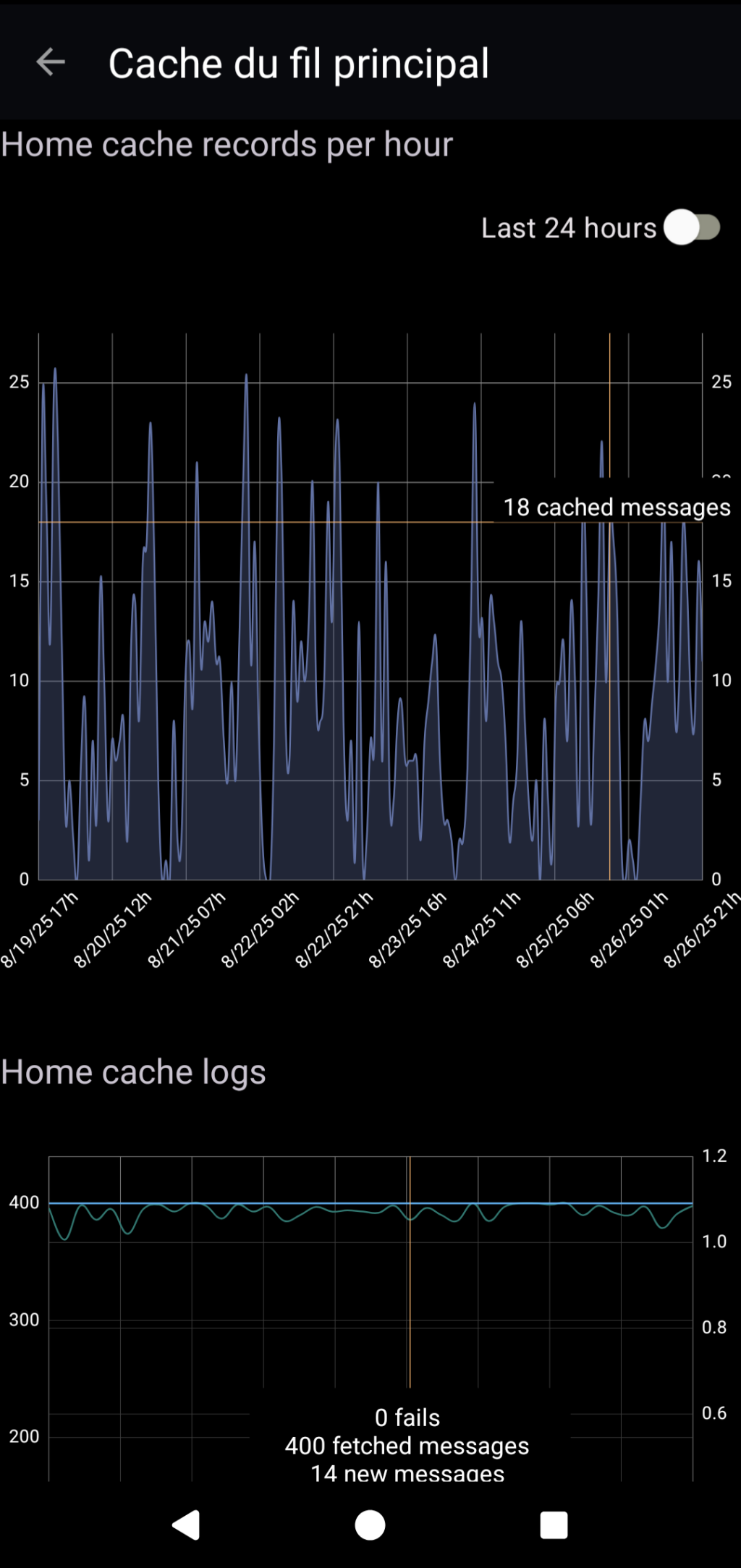

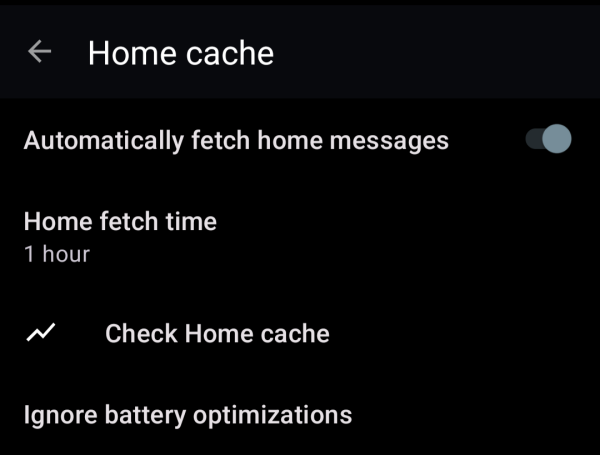

#Fedilab introduced the ability to cache your Home timeline in the background. This is also useful if you have a weak connection: the app will read the posts from the cache later. #FedilabTips

Hi, friends.

I wrote an article on being a Mastodon moderator. I talk about the process, our mental health, the challenges of mutual aid, and more.

It leaves me a little vulnerable, but I think it’s an important story to tell.

If you like it, boosts are welcomed. If you have questions, feel free to reach out.

Okay, here goes…

https://markwrites.io/being-a-mastodon-moderator

#Moderation #Moderators #Mastodon #Fediverse #MentalHealth #IAmAnxiousWritingAboutThis

ALERT for Bluesky Bridge Users 🚨 🦋

If you are using a Bluesky bridge on Mastodon, DO NOT TAG Mastodon accounts in your posts without prior informed consent from this person.

This could end up showing a preview of this person's profile picture and bio on Bluesky without their consent.

Additionally, be careful about how the upcoming Quote Post feature could behave with Bluesky bridges.

Some of us don't want our information shared with commercial platforms like Bluesky, and have not consented to this bridge.

This practice can even endanger some Fediverse users.

If you have chosen to share your own data with commercial platforms, make sure you leave the same choice to others.

This is important.

#Privacy #Mastodon #Bluesky #BlueskyBridge #Fediverse #Consent

After 2 years I managed to get WebApe onto the official list of Mastodon providers https://docs.joinmastodon.org/user/run-your-own/

In order to support the many free projects we run, we are also trying to provide hosting and management for Mastodon, Friendica and the like.

For those interested see https://webape.site/

Basically if you need a Mastodon instance, or Peertube, or Friendica and so forth, you pay a monthly fee and we do everything. No need to worry about updates and all that.

Mastodon, get your stuff together and remove any arbitration clause.

https://github.com/mastodon/mastodon/issues/35086

#mastodon #arbitration #law #IP

New Terms of Service IP clause cannot be terminated or revoked, not even by deleting content

Summary: Since it first opened, mastodon.social has operated without any sort of explicit IP grant from the users to the service, which is unusual for a social networking service. Today Mastodon ann... mcclure (GitHub)

Just moved from fosstodon to fediscience.

This is the third time I've moved #Mastodon instances! I've never wanted to move, but there have been compelling reasons each time.

I am always impressed at how seamless it has been every time I have moved instances.

I want to give #Mastodon a proper go this time, but it's hard to connect with people when no-one follows you, and hashtags only get you so far. 😞

So, if you like one of the hashtags below, please consider following me, and if you do, I'll follow you back! Thank you. 💗

#retrocomputing #msdos #windows #vintagecomputing #ai #lego #scifi #amiga #linux #doctorwho #drwho #startrek #starwars #space #uk #lgbtq #3dprinting #woodworking #discworld #reading

On 6 May, together with @wikimediaDE and the @bmuv, we are hosting the online workshop

🥁 🥁 The #Fediverse and its social media.

With @nic, @melaniebartos, @MoniKa, @digitalcourage, @cyber4EDU and @RoedigerRG, the decentralized network 🕸️ will be introduced, along with a hands-on introduction to using #Mastodon, #PeerTube and #Pixelfeld.

Register here

👉 https://www.bmuv.de/veranstaltung/teil-1-der-workshopreihe-sovereign-sustainable-digital-das-fediverse-und-seine-sozialen-medien

Part 1 of the workshop series Sovereign. Sustainable. Digital.: The Fediverse and its social media - BMUV - Event

This interactive online workshop introduces the Fediverse, a decentralized network that includes community-based social platforms such as Mastodon, PeerTube and Pixelfeld. Bundesministerium für Umwelt, Naturschutz, nukleare Sicherheit und Verbraucherschutz

#Fellowship in cooperation with the SWR X Lab and Mastodon

The #SWR X Lab is exploring the potential of the #Fediverse for public-service journalism.

In cooperation with Media Lab Bayern and the tech platform #Mastodon, a fellowship has been developed for this purpose.

The goal is to find innovative solutions to six defined challenges in working with ActivityPub.

https://www.media-lab.de/de/angebote/reinvent-social-platforms/#fellowship-in-kooperation-mit-dem-swr-x-lab-und-mastodon

#ÖRRbewegen #SWRX #MediaLabBayern #ActivityPub #ÖRR #Journalismus

Funding in cooperation with the SWR X LAB and Mastodon

★ €10,000 in funding ★ 6 months ► Find new approaches for social platforms and develop working prototypes together with other experts. Media Lab Bayern