
Items tagged with: matrix

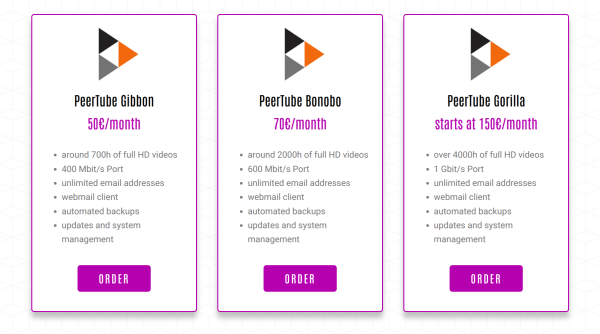

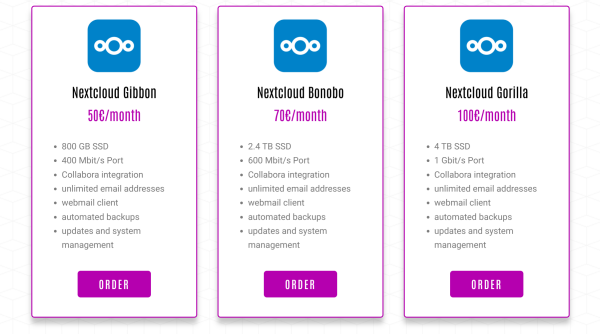

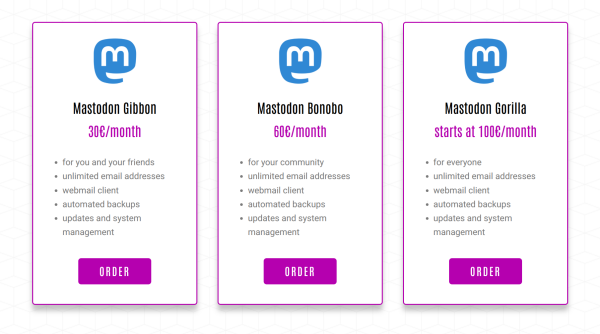

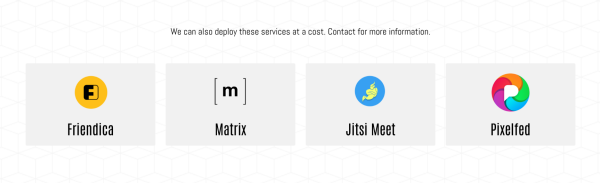

Do you want to have your own #Mastodon instance? How about #Peertube, #Nextcloud, #Matrix or a #Pixelfed instance? You can buy managed hosting for these services from https://webape.site/

#ManagedHosting means you don't need any technical skills or knowledge of how to maintain a Linux server; all of that is done for you. The #Webape project is a small business run by @tio, who also made https://trom.tf and has been providing all of those #FOSS services for free since 2021.

#meme #politics #pedophile #abuse #children #security #system #matrix #trump #government #tax #taxes #finance #money #elite #whitehouse #president #politician #democracy #evil #fail #worldorder #world #maga #qanon #conspiracy #future #view #apocalypse #doomsday

Next Monday, from 19:00, the Fediverse Stammtisch (regulars' meetup) takes place again at c-base.

I'll be giving a talk, or rather a short talk, titled: "The Fediverse viewed through the matrix of convivial technology."

Feel free to come by and let yourselves be surprised and inspired. I'd be glad to welcome you.

#fedistammtisch #Vortrag #cbase #Matrix #Konvivial #Technik #Technikfolgenabschätzung

#meme #politics #Minneapolis #Minnesota #protest #ice #resistance #news #propaganda #trump #president #whitehouse #racism #fascism #government #police #policestate #orwell #dystopia #Epstein #epsteinGate #EpsteinFiles #Finance #money #wealth #capitalism #system #matrix #billionaires #fail #justice #law #usa #america #conspiracy #mainstream #media #problem #ethics

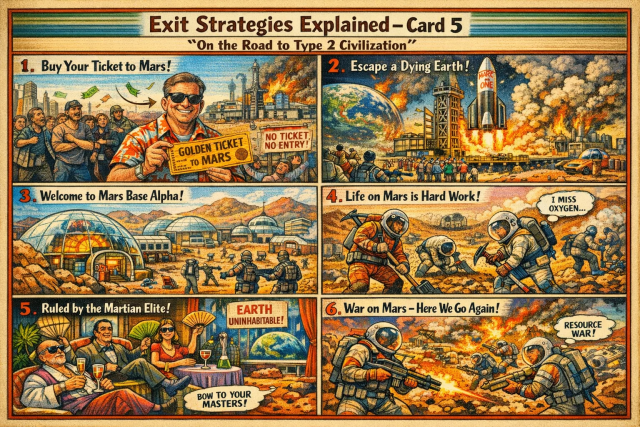

#meme #apocalypse #doomsday #collapse #civilization #future #gameover #exitstrategy #problem #endtime #system #matrix #explanation #politics #resources #fight #society #technology #money #finance #rich #wealth #luxury #economy #future #exploitation #billionaire #power #mars #space #solarsystem

We have postponed the shutdown of our TROM.tf Matrix chat from May 4th to May 14th so that people have time to migrate their accounts. For more info, see https://trom.tf/chat/

Please migrate!

Our chat.trom.tf (Matrix/Synapse) has become very difficult to manage: the database keeps growing, and there is no easy way to stop bots from registering or others from abusing our service.

For now we have closed registrations and only accept new accounts on request. We have 460 users, but we are considering shutting down the entire service this year.

It's too much work, considering that anyone can create trade-free accounts with matrix.org or other providers...

We will keep everyone informed about our future plans.

I hope nobody is surprised, but the enshittification of Discord begins (continues?)...

What else could you expect from a company that took almost a billion dollars in funding? Yes, that's with a B!

Here's to hoping that Matrix projects finally focus on product experience! *ahem* @element 👀

#Discord #Matrix #Enshittification

Discord heightens ad focus by introducing video ads to mobile apps in June

Discord looks for more ways to make money ahead of expected IPO. (Scharon Harding, Ars Technica)

On the two year anniversary of joining #Mastodon I'm super proud to share:

🚀 The Future is Federated - issue no.13 👩🚀

"The #Fediverse has empowered me to take back control from Big Tech. Now I want to help others do the same."

with mentions of @forgeandcraft (who made this awesome Fediverse t-shirt) @phanpy @ivory @Tusky @nimi @Roneyb @davidoclubb

#FOSS #FLOSS #Matrix #TheFutureIsFederated #blog #tech #activism #BigTech #socialmedia #education

The Fediverse has empowered me to take back control from Big Tech. Now I want to help others do the same.

The Fediverse has helped me regain control from the behavior modification empires of Big Tech. Now I want to help other people do the same. (Elena Rossini)

- synapse (33%, 1 vote)

- dendrite / rizz-gyatt soytrix 69.420-sigma ( ari.lt ) (0%, 0 votes)

- condu( uw )it (66%, 2 votes)

- openssl s_server (0%, 0 votes)

After a rewrite that took two and a half years, #Fractal 5 is finally out! Get the #GTK 4 #Rust #Matrix client from https://flathub.org/fr/apps/org.gnome.Fractal and enjoy new features such as #EndToEndEncryption, location sharing, or multi-account with Single-Sign On 🚀

Hi

Today, at the request of people in the #Matrix room #NCHpl https://matrix.to/#/#nchpl:pol.social, the #Nextcloud News app, a reader and aggregator for #RSS channels/feeds, was installed on Nasza Chmura (Our Cloud) https://nch.pl

If you've wanted to try out what all this RSS stuff is about, now's your chance.

And it's worth it 😀
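For anyone wondering what an RSS reader does under the hood: an RSS 2.0 feed is an XML document whose `<channel>` contains `<item>` entries, and an aggregator collects the items from several feeds into one list. The sketch below illustrates only that core idea. It is not Nextcloud News's code; `parse_rss` and `aggregate` are hypothetical names, downloading the feeds over HTTP is left out, and Atom feeds are not handled.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import List


@dataclass
class FeedItem:
    title: str
    link: str


def parse_rss(xml_text: str) -> List[FeedItem]:
    """Extract items from an RSS 2.0 document (rss > channel > item)."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iterfind("./channel/item"):
        items.append(
            FeedItem(
                title=item.findtext("title", default="").strip(),
                link=item.findtext("link", default="").strip(),
            )
        )
    return items


def aggregate(feeds: List[str]) -> List[FeedItem]:
    """Merge several feeds into one list, skipping items whose link was already seen."""
    seen = set()
    merged = []
    for xml_text in feeds:
        for entry in parse_rss(xml_text):
            if entry.link not in seen:
                seen.add(entry.link)
                merged.append(entry)
    return merged
```

Deduplicating by link is a simplification; real readers usually prefer each item's `<guid>` when the feed provides one.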

The new version of News came out 13 hours ago, so please report any bugs you find.

A list of desktop and mobile clients that work with Nextcloud News is here:

https://nextcloud.github.io/news/clients/

What's new in version 24.0.0:

https://github.com/nextcloud/news/releases/tag/24.0.0

App page:

https://apps.nextcloud.com/apps/news

Enjoy ❤️

So what does this ActivityPub (AP) plugin for WordPress (WP) mean for the #Fediwersum (Fediverse)?

Most blogs, portals, organizations and government offices in Poland run on #WordPress. Once the plugin is deployed and adopted, a great many new profiles on their own domains will appear, because each #WordPress site becomes a kind of small #fediverse server instance.
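A profile "on its own domain" can be found by other fediverse servers because they resolve handles through WebFinger. This is a minimal sketch of that lookup, assuming the WordPress site exposes the standard `/.well-known/webfinger` endpoint that Mastodon-compatible servers query. The handle `blog@example.com` and the helper names are hypothetical, and the sketch only builds the lookup URL and parses a response rather than fetching anything.

```python
import json
from typing import Optional
from urllib.parse import urlencode


def webfinger_url(handle: str) -> str:
    """Build the WebFinger lookup URL for a handle such as @blog@example.com."""
    user, _, domain = handle.lstrip("@").partition("@")
    if not user or not domain:
        raise ValueError(f"expected user@domain, got {handle!r}")
    # The resource is an acct: URI; urlencode percent-encodes ':' and '@'.
    query = urlencode({"resource": f"acct:{user}@{domain}"})
    return f"https://{domain}/.well-known/webfinger?{query}"


def actor_url(jrd_json: str) -> Optional[str]:
    """Return the ActivityPub actor URL from a WebFinger (JRD) JSON response."""
    jrd = json.loads(jrd_json)
    for link in jrd.get("links", []):
        # The actor is the "self" link served as ActivityStreams JSON.
        if link.get("rel") == "self" and link.get("type") == "application/activity+json":
            return link.get("href")
    return None
```

Some servers advertise the actor with the longer `application/ld+json; profile="https://www.w3.org/ns/activitystreams"` type; this sketch only checks the short form.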

Some time ago the plugin was taken over by Automattic, the publisher of WordPress, which has approached the matter seriously.

Is this the end of FB's dominance of the Polish infosphere?

We'll see. Fingers crossed 👍

P.S. We're launching a room for discussing deployments, configuration and good practices for adopting #ActivityPub in #Wordpress, on #Matrix #PolSocial. I'll announce it separately 😀

Plugin page -> https://wordpress.org/plugins/activitypub/#description

ActivityPub

The ActivityPub protocol is a decentralized social networking protocol based upon the ActivityStreams 2.0 data format. (WordPress.org)

#matrix #xmpp #jabber #chat #integration #compatibility #networked #federation

Have you tried?

@nitrokey

@monocles

@chapril

@mailbox_org

People should definitely worry about this! #AI can be useful, but in Discord's case, they might just as well be using it to exploit users' #data and violate their #privacy.

If you want a privacy-respecting alternative to Discord with E2EE, use #Matrix with #Element

Discord (@discord)

When it comes to sharing AI experiences with your friends, there's no place like Discord. Today, we’re introducing new AI experiments, including an AI chatbot named Clyde, AutoMod AI, and Conversation Summaries, and launching an AI Incubator. (Nitter)

Krita painting demo with commentary, 50 min. I hope to see you there.

[edit] Done!

The replay is already available here:

▶️ https://peertube.linuxrocks.online/w/rs2TQ4Xk7FrTqD3BzPJZxy

#CreativeFreedomSummit #krita #matrix

Creative Freedom Summit 2023

The Creative Freedom Summit hosted by the Fedora Design Team is a virtual event to promote Open Source creative tools, features, and benefits of use. (LinuxRocks PeerTube)

It will be next Thursday, January 19 at 8:30 am (EST) and it's free. More info: https://creativefreedomsummit.com/

Question: What should I speedpaint that day? **Wrong answers only** 😺

#CreativeFreedomSummit #krita #ArtWithOpensource #LiveStream #Matrix #Peertube

Creative Freedom Summit - Hosted by the Fedora Design Team

The conference dedicated to the features and benefits of Open Source creative tools. Be inspired and learn how you can enjoy more creative freedom! (Creative Freedom Summit)

I can post a link to a podcast or song on a #FunkWhale server, for example, and play it right here without ever leaving my Gleasonator account. It handles such media better than pretty much any other platform, and even compared with those that do support in-site viewing, Soapbox is much, much prettier.

When it comes to YouTube, however, a trick I picked up in #Matrix that also works in Soapbox is to simply run the link through a sanitizer like #Invidious.

Then I can play the video inside Soapbox.

This is handled automagically with #Fedilab if you're posting to a Soapbox Pub server.

https://invidious.fdn.fr/shorts/teK_CFdc7gM&local=true?feature=share
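Running a link "through a sanitizer like Invidious" amounts to pointing the same video at an Invidious instance instead of YouTube, as in the invidious.fdn.fr link above. A minimal sketch of that rewrite follows. It assumes the instance mirrors YouTube's `/watch` and `/shorts/` paths (the link above suggests it does for `/shorts/`), that `local=true` asks the instance to proxy the video stream, and that `si` and `feature` are share-tracking parameters safe to drop. `to_invidious` is a hypothetical helper, not part of Invidious, Soapbox or Fedilab.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlparse

# Hosts treated as YouTube; extend as needed.
YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com"}
# Assumed share-tracking parameters to strip.
DROP_PARAMS = {"si", "feature"}


def to_invidious(url: str, instance: str = "invidious.fdn.fr",
                 local: bool = True) -> Optional[str]:
    """Rewrite a YouTube link to the same video on an Invidious instance.

    Returns None if the URL is not a recognised YouTube link.
    """
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host == "youtu.be":
        # Short links carry the video ID in the path: youtu.be/<id>
        video_id = parsed.path.strip("/")
        if not video_id:
            return None
        path, params = "/watch", [("v", video_id)]
    elif host in YOUTUBE_HOSTS:
        # Assumption: Invidious mirrors YouTube paths such as /watch and /shorts/<id>.
        path, params = parsed.path, parse_qsl(parsed.query)
    else:
        return None
    params = [(k, v) for k, v in params if k not in DROP_PARAMS]
    if local:
        # Ask the instance to proxy the stream instead of fetching from Google.
        params.append(("local", "true"))
    query = urlencode(params)
    return f"https://{instance}{path}" + (f"?{query}" if query else "")
```

For example, `to_invidious("https://youtu.be/abc123")` gives `https://invidious.fdn.fr/watch?v=abc123&local=true`, with the query string in the usual `?…&…` order.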

#tallship #FOSS #media #playback

⛵

.

Matt Damon explains how Jack Nicholson CHANGED THE SCRIPT in this scene in The Departed #shorts

Have a short screenplay you wish to turn into a film or get feedback on from Oscar winning screenwriters? Submit it to our shorts competition: https://writers.coverfly. (Outstanding Screenplays | Invidious)

have problems, be aware that with #matrix you'll get a whole new level of them!

#PerAsperaAdAstra

Also, I believe many people would want a "global email database", and it's debatable whether it's good to force people not to use what they want or need.

Decentralisation isn't necessarily segregation: see #matrix, for example, where the view is consistently the same regardless of which server you're on, yet it's decentralised.

But running a "normal" server (like matrix.grin.hu) is manageable as well. And using Matrix as a messaging layer is pretty simple; it can be done with three lines of bash and curl.
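The "three lines of bash and curl" works because sending a message over the Matrix Client-Server API is a single authenticated HTTP PUT to `/_matrix/client/v3/rooms/{roomId}/send/m.room.message/{txnId}`. The sketch below shows the same request in Python. The homeserver URL, access token and room ID are placeholders, and it assumes you already have an access token for an account that has joined the room; `build_send_request` and `send_text` are hypothetical helper names.

```python
import json
import urllib.request
import uuid
from urllib.parse import quote


def build_send_request(homeserver: str, access_token: str, room_id: str,
                       text: str, txn_id: str) -> urllib.request.Request:
    """Build a Client-Server API request that posts an m.text message to a room."""
    # Room IDs look like "!abc:example.org", so '!' and ':' are percent-encoded.
    path = (f"/_matrix/client/v3/rooms/{quote(room_id, safe='')}"
            f"/send/m.room.message/{quote(txn_id, safe='')}")
    body = json.dumps({"msgtype": "m.text", "body": text}).encode("utf-8")
    return urllib.request.Request(
        homeserver.rstrip("/") + path,
        data=body,
        method="PUT",
        headers={"Authorization": f"Bearer {access_token}",
                 "Content-Type": "application/json"},
    )


def send_text(homeserver: str, access_token: str, room_id: str, text: str) -> str:
    """Send the message and return the server-assigned event ID (network call)."""
    # A fresh transaction ID per message; retrying with the same ID lets the
    # homeserver recognise the duplicate instead of posting twice.
    req = build_send_request(homeserver, access_token, room_id, text, uuid.uuid4().hex)
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["event_id"]
```

The curl equivalent is the same request: `-X PUT`, an `Authorization: Bearer …` header, and the JSON body.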

https://webirc.hackint.org/#irc://irc.hackint.org/#cccc

Since we're on #hackint, those of you using #matrix can also get in touch with us via the official Hackint bridge:

https://matrix.to/#/#cccc:hackint.org

Tonight is also our weekly open evening, and you're warmly invited to drop by!

https://koeln.ccc.de/updates/2022-06-23_Offene_Donnerstage.xml

#ccc #koeln #chaos

Matrix - Decentralised and secure communication

You're invited to talk on Matrix. If you don't already have a client, this link will help you pick one and join the conversation. If you already have one, this link will help you join the conversation. (matrix.to)

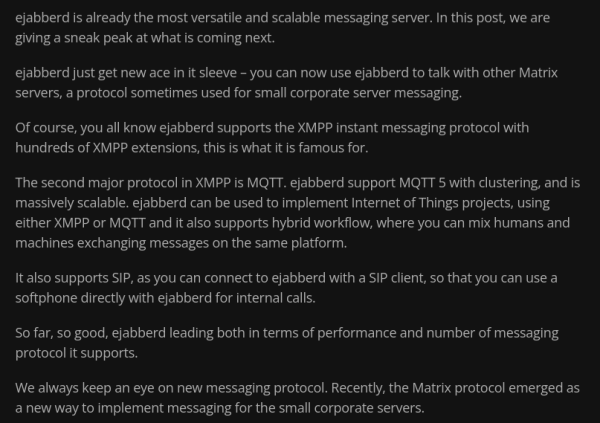

https://www.process-one.net/blog/matrix-protocol-added-to-ejabberd/

Matrix protocol added to ejabberd

Matrix protocol interoperability is being added to the next versions of ejabberd Business Edition, with a focus on scalability. (ProcessOne)

https://youtu.be/F3_Y02A53Zc

#matrix #element #decentralization #encryption #webassembly #nodejs #javascript

Matrix Live S07E24 — NodeJS and WASM Bindings for the Rust SDK

Ivan worked on NodeJS and WASM bindings for the Rust SDK. What do these words mean? Let's find out! Le menu du jour is in la description ⏬ 00:00 Hello, 01:26 Is... (YouTube)