
In 2022, I wrote about my plan to build end-to-end encryption for the Fediverse. The goals were simple:

- Provide secure encryption of message content and media attachments between Fediverse users, as a new type of Direct Message which is encrypted between participants.

- Do not pretend to be a Signal competitor.

The primary concern at the time was “honest but curious” Fediverse instance admins who might snoop on another user’s private conversations.

After I was finally happy with the client-side secret key management piece, I moved on to figuring out how to exchange public keys. And that’s where things got complicated, and work stalled for 2 years.

I wrote a series of blog posts on this complication, what I’m doing about it, and some other cool stuff in the draft specification.

- Towards Federated Key Transparency introduced the Public Key Directory project

- Federated Key Transparency Project Update talked about some of the trade-offs I made in this design

- Not supporting ECDSA at all, since FIPS 186-5 supports Ed25519

- Adding an account recovery feature, which power users can opt out of, that allows instance admins to help a user recover from losing all their keys

- Building a Key Transparency system that can tolerate GDPR Right To Be Forgotten takedown requests without invalidating history

- Introducing Alacrity to Federated Cryptography discussed how I plan to ensure that independent third-party clients stay up-to-date or lose the ability to decrypt messages

Recently, NIST published the new Federal Information Processing Standards documents for three post-quantum cryptography algorithms:

- FIPS-203 (ML-KEM, formerly known as CRYSTALS-Kyber)

- FIPS-204 (ML-DSA, formerly known as CRYSTALS-Dilithium)

- FIPS-205 (SLH-DSA, formerly known as SPHINCS+)

The race is now on to implement and begin migrating the Internet to use post-quantum KEMs. (Post-quantum signatures are less urgent.) If you’re curious why, this Cloudflare blog post explains the situation quite well.

Since I’m proposing a new protocol and implementation at the dawn of the era of post-quantum cryptography, I’ve decided to migrate the asymmetric primitives used in my proposals towards post-quantum algorithms where it makes sense to do so.

The rest of this blog post is going to talk about technical specifics and the decisions I intend to make in both projects, as well as some other topics I’ve been thinking about related to this work.

Which Algorithms, Where?

I’ll discuss these choices in detail, but for the impatient:

- Public Key Directory

- Still just Ed25519 for now

- End-to-End Encryption

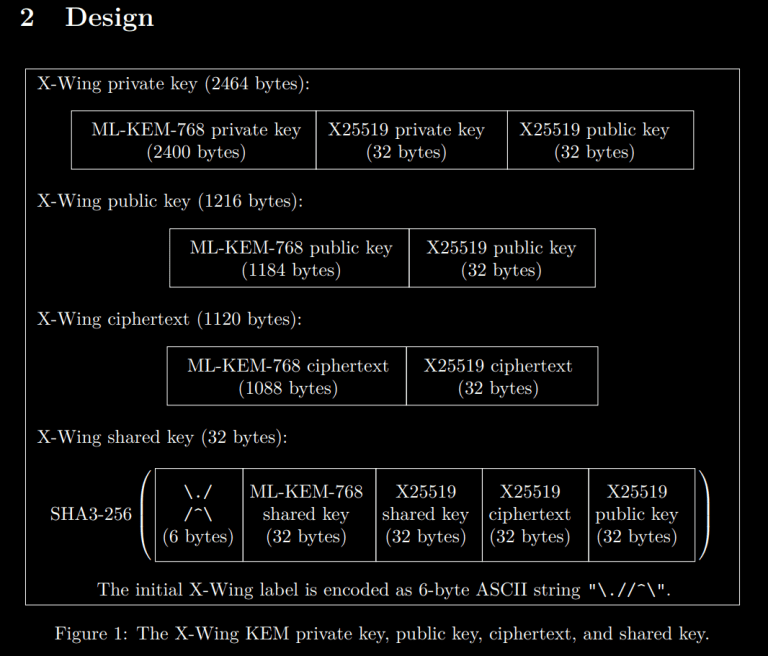

- KEMs: X-Wing (Hybrid X25519 and ML-KEM-768)

- Signatures: Still just Ed25519 for now

Virtually all other uses of cryptography are symmetric-key or keyless (i.e., hash functions), so this isn’t a significant change to the design I have in mind.

Post-Quantum Algorithm Selection Criteria

While I am personally skeptical that we will see a practical cryptographically relevant quantum computer in the next 30 years, due to various engineering challenges and a glacial pace of progress on solving them, post-quantum cryptography is still a damn good idea even if a quantum computer doesn’t emerge.

Post-Quantum Cryptography comes in two flavors:

- Key Encapsulation Mechanisms (KEMs), which I wrote about previously.

- Digital Signature Algorithms (DSAs).

Originally, my proposals were going to use Elliptic Curve Diffie-Hellman (ECDH) in order to establish a symmetric key over an untrusted channel. Unfortunately, ECDH falls apart in the wake of a crypto-relevant quantum computer. ECDH is the component that will be replaced by post-quantum KEMs.

Additionally, my proposals make heavy use of Edwards Curve Digital Signatures (EdDSA) over the edwards25519 elliptic curve group (thus, Ed25519). This could be replaced with a post-quantum DSA (e.g., ML-DSA) and function just the same, albeit with bandwidth and/or performance trade-offs.

But isn’t post-quantum cryptography somewhat new?

Lattice-based cryptography has been around almost as long as elliptic curve cryptography. One of the first designs, NTRU, was developed in 1996.

Meanwhile, ECDSA was published in 1992 by Dr. Scott Vanstone (although it was not made a standard until 1999). Lattice cryptography is pretty well-understood by experts.

However, before the post-quantum cryptography project, there wasn’t a lot of incentive for attackers to study lattices (unless they wanted to muck with homomorphic encryption).

So, naturally, there is some risk of a cryptanalysis renaissance after the first post-quantum cryptography algorithms are widely deployed to the Internet.

However, this risk is mostly a concern for KEMs, due to the output of a KEM being the key used to encrypt sensitive data. Thus, when selecting KEMs for post-quantum security, I will choose a Hybrid construction.

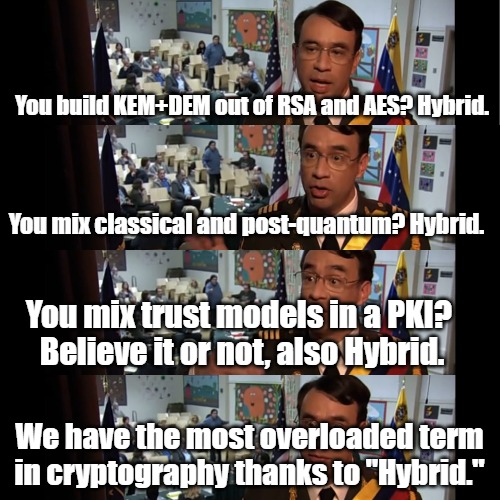

Hybrid what?

We’re not talking folfs, sonny!

Hybrid isn’t just a thing that furries do with their fursonas. It’s also a term that comes up a lot in cryptography.

Unfortunately, it comes up a little too much.

When I say we use Hybrid constructions, what I really mean is we use a post-quantum KEM and a classical KEM (such as HPKE‘s DHKEM), then combine them securely using a KDF.
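To make "combine them securely using a KDF" a little more concrete, here is a minimal sketch of a hybrid combiner. It is not X-Wing's actual combiner. The label, the length-prefixed encoding, and the function name are all assumptions made for illustration, and random bytes stand in for real ML-KEM and X25519 outputs. The intent is only to show the general shape: both shared secrets, plus the classical ciphertext and public key, are fed into one hash.

```python
import hashlib
import os

# Hypothetical domain-separation label; not taken from any specification.
LABEL = b"example-hybrid-kem-v1"


def combine_shared_secrets(ss_pq: bytes, ss_classical: bytes,
                           ct_classical: bytes, pk_classical: bytes) -> bytes:
    """Derive one 32-byte key from the outputs of both KEMs."""
    h = hashlib.sha3_256()
    for part in (LABEL, ss_pq, ss_classical, ct_classical, pk_classical):
        # Length-prefix every field so the concatenation is unambiguous.
        h.update(len(part).to_bytes(4, "big"))
        h.update(part)
    return h.digest()


# Stand-in values: random bytes in place of real KEM outputs.
ss_pq = os.urandom(32)          # would come from the post-quantum KEM
ss_classical = os.urandom(32)   # would come from the classical KEM
ct_classical = os.urandom(32)   # classical KEM ciphertext (ephemeral public key)
pk_classical = os.urandom(32)   # recipient's classical public key

session_key = combine_shared_secrets(ss_pq, ss_classical, ct_classical, pk_classical)
print(session_key.hex())
```

A real deployment should use a reviewed construction rather than a hand-rolled combiner like this one.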

Post-quantum KEMs

For the post-quantum KEM, we only really have one choice: ML-KEM. But this choice is actually three choices: ML-KEM-512, ML-KEM-768, or ML-KEM-1024.

The security margin on ML-KEM-512 is a little tight, so most cryptographers I’ve talked with recommend ML-KEM-768 instead.

Meanwhile, the NSA wants the US government to use ML-KEM-1024 for everything.

How will you hybridize your post-quantum KEM?

Originally, I was looking to use DHKEM with X25519, as part of the HPKE specification. After switching to post-quantum cryptography, I would need to combine it with ML-KEM-768 in such a way that the whole shebang is secure if either component is secure.

But then, why reinvent the wheel here? X-Wing already does that, and has some nice binding properties that a naive combination might not.

So let’s use X-Wing for our KEM.

Notably, OpenMLS is already doing this in their next release.

Post-quantum signatures

So our KEM choice seems pretty straightforward. What about post-quantum signatures?

Do we even need post-quantum signatures?

Well, the situation here is not nearly as straightforward as KEMs.

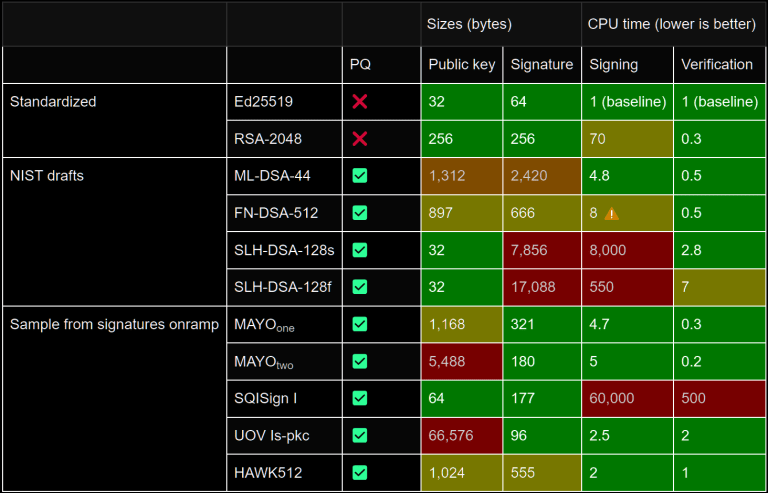

For starters, NIST chose to standardize two post-quantum digital signature algorithms (with a third coming later this year). They are as follows:

- ML-DSA (formerly CRYSTALS-Dilithium), that comes in three flavors:

- ML-DSA-44

- ML-DSA-65

- ML-DSA-87

- SLH-DSA (formerly SPHINCS+), that comes in 24 flavors

- FN-DSA (formerly FALCON), that comes in two flavors but may be excruciating to implement in constant-time (this one isn’t standardized yet)

Since we’re working at the application layer, we’re less worried about a few kilobytes of bandwidth than the networking or X.509 folks are. Relatively speaking, we care about security first, performance second, and message size last.

After all, people ship Electron, React Native, and NextJS apps that load megabytes of JavaScript code to print, “hello world,” and no one bats an eye. A few kilobytes in this context is easily digestible for us.

(As I said, this isn’t true for all layers of the stack. WebPKI in particular feels a lot of pain with large public keys and/or signatures.)

Eliminating post-quantum signature candidates

Performance considerations would eliminate SLH-DSA, which is the most conservative choice. Even with the fastest parameter set (SLH-DSA-128f), this family of algorithms is about 550x slower than Ed25519. (If we prioritize bandwidth, it becomes 8000x slower.)

Between the other two, FN-DSA is a tempting option. Although it’s difficult to implement in constant-time, it offers smaller public key and signature sizes.

However, FN-DSA is not standardized yet, and it’s only known to be safe on specific hardware architectures. (It might be safe on others, but that’s not proven yet.)

In order to allow Fediverse users to be secure on a wider range of hardware, this uncertainty would limit our choice of post-quantum signature algorithms to some flavor of ML-DSA, whether stand-alone or in a hybrid construction.

Unlike KEMs, hybrid signature constructions may be problematic in subtle ways that I don’t want to deal with. So if we were to do anything, we would probably choose a pure post-quantum signature algorithm.

Against the Early Adoption of Post-Quantum Signatures

There isn’t an immediate benefit to adopting a post-quantum signature algorithm, as David Adrian explains.

The migration to post-quantum cryptography will be a long and difficult road, which is all the more reason to make sure we learn from past efforts, and take advantage of the fact the risk is not imminent. Specifically, we should avoid:

- Standardizing without real-world experimentation

- Standardizing solutions that match how things work currently, but have significant negative externalities (increased bandwidth usage and latency), instead of designing new things to mitigate the externalities

- Deploying algorithms pre-standardization in ways that can’t be easily rolled back

- Adding algorithms that are pre-standardization or have severe shortcomings to compliance frameworks

We are not in the middle of a post-quantum emergency, and nothing points to a surprise “Q-Day” within the next decade. We have time to do this right, and we have time for an iterative feedback loop between implementors, cryptographers, standards bodies, and policymakers.

The situation may change. It may become clear that quantum computers are coming in the next few years. If that happens, the risk calculus changes and we can try to shove post-quantum cryptography into our existing protocols as quickly as possible. Thankfully, that’s not where we are.

David Adrian, Lack of post-quantum security is not plaintext.

Furthermore, there isn’t currently any commitment from the Sigsum developers to adopt a post-quantum signature scheme in the immediate future. They hard-code Ed25519 for the current iteration of the specification.

The verdict on digital signature algorithms?

Given all of the above, I’m going to opt to simply not adopt post-quantum signatures until a later date.

Version 1 of our design will continue to use Ed25519 despite it not being secure after quantum computers emerge (“Q-Day”).

When the security industry begins to see warning signs of Q-Day being realistically within a decade, we will prioritize migrating to use post-quantum signature algorithms in a new version of our design.

Should something drastic happen that would force us to decide on a post-quantum algorithm today, we would choose ML-DSA-44. However, that’s unlikely for at least several years.

Remember, Store Now, Decrypt Later doesn’t really break signatures the way it would break public-key encryption.

Miscellaneous Technical Matters

Okay, that’s enough about post-quantum for now. I worry that if I keep talking about key encapsulation, some of my regular readers will start a shitty garage band called My KEMical Romance before the end of the year.

Let’s talk about some other technical topics related to end-to-end encryption for the Fediverse!

Federated MLS

MLS was implicitly designed with the idea of having one central service for passing messages around. This makes sense if you’re building a product like Signal, WhatsApp, or Facebook Messenger.

It’s not so great for federated environments where your Delivery Service may be, in fact, more than one service (i.e., the Fediverse). An expired Internet Draft for Federated MLS talks about these challenges.

If we wanted to build atop MLS for group key agreement (as has been suggested before), we’d need to tackle this in a way that doesn’t cede control of MLS epochs to any server that gets compromised.

How to Make MLS Tolerate Federation

First, the Authentication Service component can be replaced by client-side protocols, where public keys are sourced from the Public Key Directory (PKD) services.

That is to say, from the PKD, you can fetch a valid list of Ed25519 public keys for each participant in the group.

When a group is created, the creator’s Ed25519 public key is known. To invite anyone, the creator’s software necessarily has to know the invitee’s Ed25519 public key.

In order for a group action to be performed, it must be signed by one of the public keys enrolled into the group list. Additionally, some actions may be limited by permissions attached at the time of the invite (or elevated by a more privileged user; which necessitates another group action).

By requiring a valid signature from an existing group member, we remove the capability of the Fediverse instance that’s hosting the discussion group to meddle with it in any way (unless, for some reason, the server is somehow also a participant that was invited).

But therein lies the other change we need to make: In many cases, groups will span multiple Fediverse servers, so groups shouldn’t be dependent on a single instance.

Spreading The Load Across Instances

Put simply, we need a consensus algorithm to determine which instance hosts messages. We could look to Raft as a starting point, but whatever we land on should be fair, fault-tolerant, and deterministic to all participants who can agree on the same symmetric keying material at some point in time.

To that end, I propose using an additional HKDF output from the Group Key Agreement protocol to select a “leader” for all instances involved in the group, weighted by the number of participants on each instance.

Then, every N messages (where N >= 1), a new leader is elected by the same deterministic protocol. This will be performed entirely client-side, and clients will choose N. I will refer to this as a sub-epoch, since it doesn’t coincide with a new MLS epoch.

Since the agreed-upon group key always ratchets forward when a group action occurs (i.e., whenever there’s a new epoch), getting another KDF output to elect the next leader is straightforward.
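As a rough sketch of what that election could look like on the client, the snippet below derives a value with HKDF (RFC 5869, implemented here with only the standard library) and uses it to pick an instance, weighted by participant count. The `info` label, the `sub_epoch` counter encoding, and the function names are assumptions for illustration, and `group_secret` stands in for whatever output the group key agreement would actually export.

```python
import hashlib
import hmac


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int) -> bytes:
    """HKDF (RFC 5869) with HMAC-SHA256: extract, then expand."""
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def elect_leader(group_secret: bytes, sub_epoch: int, participants: dict[str, int]) -> str:
    """Pick one instance, weighted by participant count, identically on every client."""
    # Sort hostnames so every client walks the instances in the same order.
    instances = sorted(host for host, count in participants.items() if count > 0)
    total = sum(participants[host] for host in instances)
    if total == 0:
        raise ValueError("no participants")
    # Hypothetical label; the sub-epoch counter makes each election distinct.
    info = b"leader-election" + sub_epoch.to_bytes(8, "big")
    # 64 bytes reduced modulo a small total keeps modulo bias negligible.
    ticket = int.from_bytes(hkdf_sha256(group_secret, b"", info, 64), "big") % total
    for host in instances:
        ticket -= participants[host]
        if ticket < 0:
            return host
    raise AssertionError("unreachable")


members = {"a.example": 3, "b.example": 1}
print(elect_leader(b"\x07" * 32, 0, members))
```

Because every input is either shared keying material or public group state, any two clients holding the same secret and the same participant list arrive at the same leader without talking to each other.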

This isn’t a fully fleshed out idea. Building consensus protocols that can handle real-world operational issues is heavily specialized work and there’s a high risk of falling for the illusion of safety until it’s too late. I will probably need help with this component.

That said, we aren’t building an anonymity network, so the cost of getting a detail wrong isn’t measurable in blood.

We aren’t really concerned with Sybil attacks. Winning the election just means you’re responsible for being a dumb pipe for ciphertext. Client software should trust the instance software as little as possible.

We also probably don’t need to worry about availability too much. Since we’re building atop ActivityPub, when a server goes down, the other instances can hold encrypted messages in the outbox for the host instance to pick up when it’s back online.

If that’s not satisfactory, we could also select both a primary and secondary leader for each epoch (and sub-epoch), to have built-in fail-over when more than one instance is involved in a group conversation.

If messages aren’t being delivered for an unacceptable period of time, client software can forcefully initiate a new leader election by expiring the current MLS epoch (i.e. by rotating their own public key and sending the relevant bundle to all other participants).

Those are just some thoughts. I plan to talk it over with people who have more expertise in the relevant systems.

And, as with the rest of this project, I will write a formal specification for this feature before I write a single line of production code.

Abuse Reporting

I could’ve sworn I talked about this already, but I can’t find it in any of my previous ramblings, so here’s as good a place as any.

The intent for end-to-end encryption is privacy, not secrecy.

What does this mean exactly? From the opening of Eric Hughes’ A Cypherpunk’s Manifesto:

Privacy is necessary for an open society in the electronic age. Privacy is not secrecy. A private matter is something one doesn’t want the whole world to know, but a secret matter is something one doesn’t want anybody to know.

Privacy is the power to selectively reveal oneself to the world.

Eric Hughes (with whitespace and emphasis added)

Unrelated: This is one reason why I use “secret key” when discussing asymmetric cryptography, rather than “private key”. It also lends towards sk and pk as abbreviations, whereas “private” and “public” both start with the letter P, which is annoying.

With this distinction in mind, abuse reporting is not inherently incompatible with end-to-end encryption or any other privacy technology.

In fact, it’s impossible to create useful social technology without the ability for people to mitigate abuse.

So, content warning: This is going to necessarily discuss some gross topics, albeit not in any significant detail. If you’d rather not read about them at all, feel free to skip this section.

When thinking about the sorts of problems that call for an abuse reporting mechanism, you really need to consider the most extreme cases, such as someone joining group chats to spam unsuspecting users with unsolicited child sexual abuse material (CSAM), flashing imagery designed to trigger seizures, or graphic depictions of violence.

That’s gross and unfortunate, but the reality of the Internet.

However, end-to-end encryption also needs to prioritize privacy over appeasing lazy cops who would rather everyone’s devices include a mandatory little cop that watches all your conversations and snitches on you if you do anything that might be illegal, or against the interest of your government and/or corporate masters. You know the type of cop. They find privacy and encryption to be rather inconvenient. After all, why bother doing their jobs (i.e., actual detective work) when you can just criminalize end-to-end encryption and use dragnet surveillance instead?

Whatever we do, we will need to strike a balance between protecting users’ privacy (without any backdoors or privileged access for lazy cops) and community safety.

Thus, the following mechanisms must be in place:

- Groups must have the concept of an “admin” role, who can delete messages on behalf of all users and remove users from the group. (Signal currently doesn’t have this.)

- Users must be able to delete messages on their own device and block users that send abusive content. (The Fediverse already has this sort of mechanism, so we don’t need to be inventive here.)

- Users should have the ability to report individual messages to the instance moderators.

I’m going to focus on item 3, because that’s where the technically and legally thorny issues arise.

Keep in mind, this is just a core-dump of thoughts about this topic, and I’m not committing to anything right now.

Technical Issues With Abuse Reporting

First, the end-to-end encryption must be immune to Invisible Salamanders attacks. If it’s not, go back to the drawing board.

Every instance will need to have a moderator account, who can receive abuse reports from users. This can be a shared account for moderators or a list of moderators maintained by the server.

When an abuse report is sent to the moderation team, what needs to happen is that the encryption keys for those specific messages are re-wrapped and sent to the moderators.

So long as you’re using a forward-secure ratcheting protocol, this doesn’t imply access to the encryption keys for other messages, so the information disclosed is limited to the messages that a participant in the group consents to disclosing. This preserves privacy for the rest of the group chat.
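To illustrate why disclosing one message key says nothing about its neighbors, here is a simplified symmetric hash ratchet. It follows the chain-key step suggested in Signal's Double Ratchet documentation (HMAC-SHA256 with the constants 0x01 and 0x02). That is an assumption for the sake of the example, not the key schedule this project will use, and MLS derives its per-message keys differently.

```python
import hashlib
import hmac


def ratchet_step(chain_key: bytes) -> tuple[bytes, bytes]:
    """Return (message_key, next_chain_key) derived from the current chain key."""
    message_key = hmac.new(chain_key, b"\x01", hashlib.sha256).digest()
    next_chain_key = hmac.new(chain_key, b"\x02", hashlib.sha256).digest()
    return message_key, next_chain_key


def message_keys(initial_chain_key: bytes, count: int) -> list[bytes]:
    """Derive the first `count` message keys by ratcheting forward."""
    keys, chain_key = [], initial_chain_key
    for _ in range(count):
        message_key, chain_key = ratchet_step(chain_key)
        keys.append(message_key)
    return keys


keys = message_keys(b"\x00" * 32, 5)  # placeholder chain key, not a real secret

# A reporter re-wraps only the key for the message being reported (index 2 here).
disclosed = {2: keys[2]}
print(disclosed[2].hex())
```

Each message key is a one-way output of a chain key that the moderator never sees, so (assuming HMAC-SHA256 behaves as a PRF) holding `keys[2]` gives no way to compute `keys[1]` or `keys[3]`.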

When receiving a report, moderators should not only be able to see the reported messages’ contents (in the order that they were sent), but also how many messages were omitted from the transcript, to prevent a type of attack I colloquially refer to as “trolling through omission”. This old meme illustrates the concept nicely:

And this all seems pretty straightforward, right? Let users protect themselves and report abuse in such a way that doesn’t invalidate the privacy of unrelated messages or give unfettered access to the group chats. “Did Captain Obvious write this section?”
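The omitted-message bookkeeping mentioned above might look something like the following. The function name and marker format are made up for illustration. This only shows the counting: a real design would also need to make the counts verifiable by the moderator, which this sketch does not attempt.

```python
def build_report(messages: list[str], reported: set[int]) -> list[str]:
    """Return reported messages in send order, with a marker for each gap."""
    transcript, omitted = [], 0
    for index, body in enumerate(messages):
        if index in reported:
            if omitted:
                transcript.append(f"[{omitted} message(s) omitted]")
                omitted = 0
            transcript.append(body)
        else:
            omitted += 1
    # Messages after the last reported one also count as omitted.
    if omitted:
        transcript.append(f"[{omitted} message(s) omitted]")
    return transcript


chat = ["a", "b", "c", "d", "e"]
for line in build_report(chat, {1, 3}):
    print(line)
```

A moderator reading this output can tell that context exists around each reported message, even though they cannot read it.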

But things aren’t so clean when you consider the legal ramifications.

Potential Legal Issues With Abuse Reporting

Suppose Alice, Bob, and Troy start an encrypted group conversation. Alice is the group admin and can delete messages or boot people from the chat.

One day, Troy decides to send illegal imagery (e.g., CSAM) to the group chat.

Bob immediately, disgusted, reports it to his instance moderator (Dave) as well as Troy’s instance moderator (Evelyn). Alice then deletes the messages for her and Bob and kicks Troy from the chat.

Here’s where the legal questions come in.

If Dave and Evelyn are able to confirm that Troy did send CSAM to Alice and Bob, did Bob’s act of reporting the material to them count as an act of distribution (i.e., to Dave and/or Evelyn, who would not be able to decrypt the media otherwise)?

If they aren’t able to confirm the reports, does Alice’s erasure count as destruction of evidence (i.e., because they cannot be forwarded to law enforcement)?

Are Bob and Alice legally culpable for possession? What about Dave and Evelyn, whose servers are hosting the (albeit encrypted) material?

It’s not abundantly clear how the law will intersect with technology here, nor what specific technical mechanisms would need to be in place to protect Alice, Bob, Dave, and Evelyn from a particularly malicious user like Troy.

Obviously, I am not a lawyer. I have an understanding with my lawyer friends that I will not try to interpret law or write my own contracts if they don’t roll their own crypto.

That said, I do have some vague ideas for mitigating the risk.

Ideas For Risk Mitigation

To contend with this issue, one thing we could do is separate the abuse reporting feature from the “fetch and decrypt the attached media” feature, so that while instance moderators will be capable of fetching the reported abuse material, it doesn’t happen automatically.

When the “reason” attached to an abuse report signals CSAM in any capacity, the client software used by moderators could also wholesale block the download of said media.

Whether that would be sufficient to mitigate the legal matters raised previously, I can’t say.

And there’s still a lot of other legal uncertainty to figure out here.

- Do instance moderators actually have a duty to forward CSAM reports to law enforcement?

- If so, how should abuse forwarding be implemented?

- How do we train law enforcement personnel to receive and investigate these reports WITHOUT frivolously arresting the wrong people or seizing innocent Fediverse servers?

- How do we ensure instance admins are broadly trained to handle this?

- How do we deal with international law?

- How do we prevent scope creep?

- While there is public interest in minimizing the spread of CSAM, which is basically legally radioactive, I’m not interested in ever building a “snitch on women seeking reproductive health care in a state where abortion is illegal” capability.

- Does Section 230 matter for any of these questions?

We may not know the answers to these questions until the courts make specific decisions that establish relevant case law, or our governments pass legislation that clarifies everyone’s rights and responsibilities for such cases.

Until then, the best answer may simply be to do nothing.

That is to say, let admins delete messages for the whole group, let users delete messages they don’t want on their own hardware, and let admins receive abuse reports from their users… but don’t do anything further.

Okay, we should definitely require an explicit separate action to download and decrypt the media attached to a reported message, rather than have it be automatic, but that’s it.

What’s Next?

For the immediate future, I plan on continuing to develop the Federated Public Key Directory component until I’m happy with its design. Then, I will begin developing the reference implementations for both client and server software.

Once that’s in a good state, I will move onto finishing the E2EE specification. Then, I will begin building the client software and relevant server patches for Mastodon, and spinning up a testing instance for folks to play with.

Timeline-wise, I would expect most of this to happen in 2025.

I wish I could promise something sooner, but I’m not fond of moving fast and breaking things, and I do have a full time job unrelated to this project.

Hopefully, by the next time I pen an update for this project, we’ll be closer to launching. (And maybe I’ll have answers to some of the legal concerns surrounding abuse reporting, if we’re lucky.)

https://soatok.blog/2024/09/13/e2ee-for-the-fediverse-update-were-going-post-quantum/

#E2EE #endToEndEncryption #fediverse #FIPS #Mastodon #postQuantumCryptography

Update (2024-06-06): There is an update on this project.

As Twitter’s new management continues to nosedive the platform directly into the ground, many people are migrating to what seem like drop-in alternatives; i.e. Cohost and Mastodon. Some are even considering new platforms that none of us have heard of before (one is called “Hive”).

Needless to say, these are somewhat chaotic times.

One topic that has come up several times in the past few days, to the astonishment of many new Mastodon users, is that Direct Messages between users aren’t end-to-end encrypted.

And while that fact makes Mastodon DMs no less safe than Twitter DMs have been this whole time, there is clearly a lot of value and demand in deploying end-to-end encryption for ActivityPub (the protocol that Mastodon and other Fediverse software uses to communicate).

However, given that Melon Husk apparently wants to hurriedly ship end-to-end encryption (E2EE) in Twitter, in some vain attempt to compete with Signal, I took it upon myself to kickstart the E2EE effort for the Fediverse.

https://twitter.com/elonmusk/status/1519469891455234048

So I’d like to share my thoughts about E2EE, how to design such a system from the ground up, and why the direction Twitter is heading looks to be security theater rather than serious cryptographic engineering.

If you’re not interested in those things, but are interested in what I’m proposing for the Fediverse, head on over to the GitHub repository hosting my work-in-progress proposal draft as I continue to develop it.

How to Quickly Build E2EE

If you were feeling particularly cavalier about your E2EE designs, you could just generate then dump public keys through a server you control, pass them between users, and have them encrypt client-side. Over and done. Check that box.

Every public key would be ephemeral and implicitly trusted, and the threat model would mostly be, “I don’t want to deal with law enforcement data requests.”

Hell, I’ve previously written an incremental blog post to teach developers about E2EE that begins with this sort of design. Encrypt first, ratchet second, manage trust relationships on public keys last.

If you’re catering to a slightly tech-savvy audience, you might throw in SHA256(pk1 + pk2) -> hex2dec() and call it a fingerprint / safety number / “conversation key” and not think further about this problem.
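For illustration only, that kind of quick-and-dirty fingerprint might look like the sketch below. The function name, the key sorting (so both parties compute the same value), and the six-digit grouping are assumptions made for the example. It is not a recommended design, just a concrete picture of the shortcut being described.

```python
import hashlib


def naive_fingerprint(pk1: bytes, pk2: bytes) -> str:
    """SHA-256 over both public keys, rendered as groups of decimal digits."""
    # Sorting is an assumption so that both parties compute the same value.
    first, second = sorted((pk1, pk2))
    digest = hashlib.sha256(first + second).digest()
    # A 256-bit integer has at most 78 decimal digits; pad to a fixed width.
    digits = str(int.from_bytes(digest, "big")).zfill(78)
    return " ".join(digits[i:i + 6] for i in range(0, 78, 6))


# Placeholder byte strings standing in for two 32-byte public keys.
print(naive_fingerprint(b"\x01" * 32, b"\x02" * 32))
```

Computing the number is the easy part. Getting users to actually compare it out-of-band is where this approach falls down.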

Look, technical users can verify out-of-band that they’re not being machine-in-the-middle attacked by our service.

An absolute fool who thinks most people will ever do this

From what I’ve gathered, this appears to be the direction that Twitter is going.

https://twitter.com/wongmjane/status/1592831263182028800

Now, if you’re building E2EE into a small hobby app that you developed for fun (say: a World of Warcraft addon for erotic roleplay chat), this is probably good enough.

If you’re building a private messaging feature that is intended to “superset Signal” for hundreds of millions of people, this is woefully inadequate.

https://twitter.com/elonmusk/status/1590426255018848256

Art: LvJ

If this is, indeed, the direction Musk is pushing what’s left of Twitter’s engineering staff, here is a brief list of problems with what they’re doing.

- Twitter Web. How do you access your E2EE DMs after opening Twitter in your web browser on a desktop computer?

- If you can, how do you know twitter.com isn’t including malicious JavaScript to snarf up your secret keys on behalf of law enforcement or a nation state with a poor human rights record?

- If you can, how are secret keys managed across devices?

- If you use a password to derive a secret key, how do you prevent weak, guessable, or reused passwords from weakening the security of the users’ keys?

- If you cannot, how do users decide which is their primary device? What if that device gets lost, stolen, or damaged?

- Authenticity. How do you reason about the person you’re talking with?

- Forward Secrecy. If your secret key is compromised today, can you recover from this situation? How will your conversation participants reason about your new Conversation Key?

- Multi-Party E2EE. If a user wants to have a three-way E2EE DM with the other members of their long-distance polycule, does Twitter enable that?

- How are media files encrypted in a group setting? If you fuck this up, you end up like Threema.

- Is your group key agreement protocol vulnerable to insider attacks?

- Cryptography Implementations.

- What does the KEM look like? If you’re using ECC, which curve? Is a common library being used in all devices?

- How are you deriving keys? Are you just using the result of an elliptic curve (scalar x point) multiplication directly without hashing first?

- Independent Third-Party Review.

- Who is reviewing your protocol designs?

- Who is reviewing your cryptographic primitives?

- Who is reviewing the code that interacts with E2EE?

- Is there even a penetration test before the feature launches?

As more details about Twitter’s approach to E2EE DMs come out, I’m sure the above list will be expanded with even more questions and concerns.

My hunch is that they’ll reuse liblithium (which uses Curve25519 and Gimli) for Twitter DMs, since the only expert I’m aware of in Musk’s employ is the engineer that developed that library for Tesla Motors. Whether they’ll port it to JavaScript or just compile to WebAssembly is hard to say.

How To Safely Build E2EE

You first need to decompose the E2EE problem into five separate but interconnected problems.

- Client-Side Secret Key Management.

- Multi-device support

- Protect the secret key from being pilfered (i.e. by in-browser JavaScript delivered from the server)

- Public Key Infrastructure and Trust Models.

- TOFU (the SSH model)

- X.509 Certificate Authorities

- Certificate/Key/etc. Transparency

- SigStore

- PGP’s Web Of Trust

- Key Agreement.

- While this is important for 1:1 conversations, it gets combinatorially complex when you start supporting group conversations.

- On-the-Wire Encryption.

- Direct Messages

- Media Attachments

- Abuse-resistance (i.e. message franking for abuse reporting)

- The Construction of the Previous Four.

- The vulnerability of most cryptographic protocols exists in the joinery between the pieces, not the pieces themselves. For example, Matrix.

This might not be obvious to someone who isn’t a cryptography engineer, but each of those five problems is still really hard.

To wit: The latest IETF RFC draft for Messaging Layer Security, which tackles the Key Agreement problem above, clocks in at 137 pages.

Additionally, the order in which I specified these problems matters; it represents my opinion of which problem is relatively harder than the others.

When Twitter’s CISO, Lea Kissner, resigned, they lost a cryptography expert who was keenly aware of the relative difficulty of the first problem.

https://twitter.com/LeaKissner/status/1592937764684980224

You may also notice the order largely mirrors my previous guide on the subject, in reverse. This is because when you're teaching a subject, you start with the simplest and most familiar component. When you’re solving problems, you generally want the opposite: Solve the hardest problems first, then work towards the easier ones.

This is precisely what I’m doing with my E2EE proposal for the Fediverse.

The Journey of a Thousand Miles Begins With A First Step

Before you write any code, you need specifications.

Before you write any specifications, you need a threat model.

Before you write any threat models, you need both a clear mental model of the system you’re working with and how the pieces interact, and a list of security goals you want to achieve.

Less obviously, you need a specific list of non-goals for your design: Properties that you will not prioritize. A lot of security engineering involves trade-offs. For example: elliptic curve choice for digital signatures is largely a trade-off between speed, theoretical security, and real-world implementation security.

If you do not clearly specify your non-goals, they still exist implicitly. However, you may find yourself contradicting them as you change your mind over the course of development.

Being wishy-washy about your security goals is a good way to compromise the security of your overall design.

In my Mastodon E2EE proposal document, I have a section called Design Tenets, which states the priorities used to make trade-off decisions. I chose Usability as the highest priority, because of AviD’s Rule of Usability.

Security at the expense of usability comes at the expense of security.

Avi Douglen, Security StackExchange

Underneath Tenets, I wrote Anti-Tenets. These are things I explicitly and emphatically do not want to prioritize. Interoperability with any incumbent designs (OpenPGP, Matrix, etc.) is the most important anti-tenet when it comes to making decisions. If our end-state happens to interop with someone else’s design, cool. I’m not striving for it though!

Finally, this section concludes with a more formal list of Security Goals for the whole project.

Art: LvJ

Every component (from the above list of five) in my design will have an additional dedicated Security Goals section and Threat Model. For example: Client-Side Secret Key Management.

You will then need to tackle each component independently. The threat model for secret-key management is probably the trickiest. The actual encryption of plaintext messages and media attachments is comparatively simple.

Finally, once all of the pieces are laid out, you have the monumental (dare I say, mammoth) task of stitching them together into a coherent, meaningful design.

If you did your job well at the outset, and correctly understand the architecture of the distributed system you’re working with, this will mostly be straightforward.

Making Progress

At every step of the way, you do need to stop and ask yourself, “If I was an absolute chaos gremlin, how could I fuck with this piece of my design?” The more pieces your design has, the longer the list of ways to attack it will grow.

It’s also helpful to occasionally consider formal methods and security proofs. This can have surprising implications for how you use some algorithms.

You should also be familiar enough with the cryptographic primitives you’re working with before you begin such a journey; because even once you’ve solved the key management story (problems 1, 2 and 3 from the above list of 5), cryptographic expertise is still necessary.

- If you’re feeding data into a hash function, you should also be thinking about domain separation. More information.

- If you’re feeding data into a MAC or signature algorithm, you should also be thinking about canonicalization attacks. More information.

- If you’re encrypting data, you should be thinking about multi-key attacks and confused deputy attacks. Also, the cryptographic doom principle if you’re not using IND-CCA3 algorithms.

- At a higher-level, you should proactively defend against algorithm confusion attacks.
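The first two bullets above (domain separation and canonicalization) can be sketched in a few lines of Python. This is a minimal illustration, assuming HMAC-SHA-256 from the standard library. The helper names (`encode_fields`, `tag`) and the purpose labels are hypothetical and do not come from any specification.

```python
import hashlib
import hmac


def encode_fields(*fields: bytes) -> bytes:
    """Length-prefix every field so the field boundaries are unambiguous."""
    out = bytearray()
    out += len(fields).to_bytes(8, "little")
    for field in fields:
        out += len(field).to_bytes(8, "little")
        out += field
    return bytes(out)


def tag(key: bytes, purpose: bytes, *fields: bytes) -> bytes:
    """HMAC-SHA-256 over a domain-separated, canonically encoded message."""
    return hmac.new(key, encode_fields(purpose, *fields), hashlib.sha256).digest()


key = bytes(32)  # all-zero demo key; never use a fixed key in practice

# Naive concatenation cannot tell these two inputs apart...
naive_a = hmac.new(key, b"alice" + b"bob", hashlib.sha256).digest()
naive_b = hmac.new(key, b"alic" + b"ebob", hashlib.sha256).digest()

# ...but a length-prefixed encoding can.
safe_a = tag(key, b"example/dm-header/v1", b"alice", b"bob")
safe_b = tag(key, b"example/dm-header/v1", b"alic", b"ebob")

# The purpose label keeps a tag made for one context from verifying in another.
other_purpose = tag(key, b"example/media-header/v1", b"alice", b"bob")
```

The naive tags collide because the MAC only ever sees the bytes `alicebob`; the encoded variants differ because the lengths are authenticated along with the data.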

How Do You Measure Success?

It’s tempting to call the project “done” once you’ve completed your specifications and built a prototype, and maybe even published a formal proof of your design, but you should first collect data on every important metric:

- How easy is it to use your solution?

- How hard is it to misuse your solution?

- How easy is it to attack your solution? Which attackers have the highest advantage?

- How stable is your solution?

- How performant is your solution? Are the slow pieces the deliberate result of a trade-off? How do you know the balance was struck correctly?

Where We Stand Today

I’ve only begun writing my proposal, and I don’t expect it to be truly ready for cryptographers or security experts to review until early 2023.

However, my clearly specified tenets and anti-tenets were already useful in discussing my proposal on the Fediverse.

@soatok @fasterthanlime Should probably embed the algo used for encryption in the data used for storing the encrypted blob, to support multiples and future changes.

@fabienpenso@hachyderm.io proposes in-band protocol negotiation instead of versioned protocols

The main things I wanted to share today are:

- The direction Twitter appears to be heading with their E2EE work, and why I think it’s a flawed approach

- Designing E2EE requires a great deal of time, care, and expertise; getting to market quicker at the expense of a clear and careful design is almost never the right call

Mastodon? ActivityPub? Fediverse? OMGWTFBBQ!

In case anyone is confused about Mastodon vs ActivityPub vs Fediverse lingo:

The end goal of my proposal is that I want to be able to send DMs to queer furries that use Mastodon such that only my recipient can read them.

Achieving this end goal almost exclusively requires building for ActivityPub broadly, not Mastodon specifically.

However, I only want to be responsible for delivering this design into the software I use, not for every single possible platform that uses ActivityPub, nor all the programming languages they’re written in.

I am going to be aggressive about preventing scope creep, since I’m doing all this work for free. (I do have a Ko-Fi, but I won’t link to it from here. Send your donations to the people managing the Mastodon instance that hosts your account instead.)

My hope is that the design documents and technical specifications become clear enough that anyone can securely implement end-to-end encryption for the Fediverse–even if special attention needs to be given to the language-specific cryptographic libraries that you end up using.

Art: LvJ

Why Should We Trust You to Design E2EE?

This sort of question comes up inevitably, so I’d like to tackle it preemptively.

My answer to every question that begins with, “Why should I trust you” is the same: You shouldn’t.

There are certainly cryptography and cybersecurity experts that you will trust more than me. Ask them for their expert opinions of what I’m designing instead of blanketly trusting someone you don’t know.

I’m not interested in revealing my legal name, or my background with cryptography and computer security. Credentials shouldn’t matter here.

If my design is good, you should be able to trust it because it’s good, not because of who wrote it.

If my design is bad, then you should trust whoever proposes a better design instead. Part of why I’m developing it in the open is so that it may be forked by smarter engineers.

Knowing who I am, or what I’ve worked on before, shouldn’t enter your trust calculus at all. I’m a gay furry that works in the technology industry and this is what I’m proposing. Take it or leave it.

Why Not Simply Rubber-Stamp Matrix Instead?

(This section was added on 2022-11-29.)

There’s a temptation, most often found in the sort of person that comments on the /r/privacy subreddit, to ask why even do all of this work in the first place when Matrix already exists?

The answer is simple: I do not trust Megolm, the protocol designed for Matrix.

Megolm has benefited from amateur review for four years. Non-cryptographers will confuse this observation with the proposition that Matrix has benefited from peer review for four years. Those are two different propositions.

In fact, the first time someone with cryptography expertise bothered to look at Matrix for more than a glance, they found critical vulnerabilities in its design. These are the kinds of vulnerabilities that are not easily mitigated, and should be kept in mind when designing a new protocol.

You don’t have to take my word for it. Listen to the Security, Cryptography, Whatever podcast episode if you want cryptographic security experts’ takes on Matrix and these attacks.

From one of the authors of the attack paper:

So they kind of, after we disclosed to them, they shared with us their timeline. It’s not fixed yet. It’s a, it’s a bigger change because they need to change the protocol. But they always said like, Okay, fair enough, they’re gonna change it. And they also kind of announced a few days after kind of the public disclosure based on the public reaction that they should prioritize fixing that. So it seems kind of in the near future, I don’t have the timeline in front of me right now. They’re going to fix that in the sense of like the— because there’s, notions of admins and so on. So like, um, so authenticating such group membership requests is not something that is kind of completely outside of, kind of like the spec. They just kind of need to implement the appropriate authentication and cryptography.

Martin Albrecht, SCW podcast

From one of the podcast hosts:

I guess we can at the very least tell anyone who’s going forward going to try that, that like, yes indeed. You should have formal models and you should have proofs. And so there’s this, one of the reactions to kind of the kind of attacks that we presented and also to prior previous work where we kind of like broken some cryptographic protocols is then to say like, “Well crypto’s hard”, and “don’t roll your own crypto.” But in a way the thing is like, you know, we need some people to roll their own crypto because that’s how we have crypto. Someone needs to roll it. But we have developed techniques, we have developed formalisms, we have developed methods for making sure it doesn’t have to be hard, it’s not, it’s not a dark art kind of that only kind of a few, a select few can master, but it’s, you know, it’s a science and you can learn it. So, but you need to then indeed employ a cryptographer in kind of like forming, modeling your protocol and whenever you make changes, then, you know, they need to look over this and say like, Yes, my proof still goes through. Um, so like that is how you do this. And then, then true engineering is still hard and it will remain hard and you know, any science is hard, but then at least you have some confidence in what you’re doing. You might still then kind of on the space and say like, you know, the attack surface is too large and I’m not gonna to have an encrypted backup. Right. That’s then the problem of a different hard science, social science. Right. But then just use the techniques that we have, the methods that we have to establish what we need.

Thomas Ptacek, SCW podcast

It’s tempting to listen to these experts and say, “OK, you should use libsignal instead.”

But libsignal isn’t designed for federation and didn’t prioritize group messaging. The UX for Signal is like an IM application between two parties. It’s a replacement for SMS.

It’s tempting to say, “Okay, but you should use MLS then; never roll your own,” but MLS doesn’t answer the group membership issue that plagued Matrix. It punts on these implementation details.

Even if I use an incumbent protocol that privacy nerds think is good, I’ll still have to stitch it together in a novel manner. There is no getting around this.

Maybe wait until I’ve finished writing the specifications for my proposal before telling me I shouldn’t propose anything.

Credit for art used in header: LvJ, Harubaki

https://soatok.blog/2022/11/22/towards-end-to-end-encryption-for-direct-messages-in-the-fediverse/

There is, at the time of this writing, an ongoing debate in the Crypto Forum Research Group (CFRG) at the IETF about KEM combiners.

One of the participants, Deirdre Connolly, wrote a blog post titled How to Hold KEMs. The subtitle is refreshingly honest: “A living document on how to juggle these damned things.”

Deirdre also co-authored the paper describing a Hybrid KEM called X-Wing, which combines X25519 with ML-KEM-768 (which is the name for a standardized tweak of Kyber, which I happened to opine on a few years ago).

After sharing a link to Deirdre’s blog in a few places, several friendly folk expressed confusion about KEMs in general.

So very briefly, here’s an introduction to Key Encapsulation Mechanisms in general, to serve as a supplementary resource for any future discussion on KEMs.

You shouldn’t need to understand lattices or number theory, or even how to read a mathematics paper, to understand this stuff.

Building Intuition for KEMs

For the moment, let’s suspend most real-world security risks and focus on a simplified, ideal setting.

To begin with, you need some kind of Asymmetric Encryption.

Asymmetric Encryption means, “Encrypt some data with a Public Key, then Decrypt the ciphertext with a Secret Key.” You don’t need to know, or even care, about how it works at the moment.

Your mental model for asymmetric encryption and decryption should look like this:

    interface AsymmetricEncryption {
        encrypt(publicKey: CryptoKey, plaintext: Uint8Array);
        decrypt(secretKey: CryptoKey, ciphertext: Uint8Array);
    }

As I’ve written previously, you never want to actually use asymmetric encryption directly.

Using asymmetric encryption safely means using it to exchange a key used for symmetric data encryption, like so:

    // Alice sends an encrypted key to Bob
    symmetricKey = randomBytes(32)
    sendToBob = AsymmetricEncryption.encrypt(
        bobPublicKey,
        symmetricKey
    )

    // Bob decrypts the encrypted key from Alice
    decrypted = AsymmetricEncryption.decrypt(
        bobSecretKey,
        sendToBob
    )
    assert(decrypted == symmetricKey) // true

You can then use symmetricKey to encrypt your actual messages and, unless something else goes wrong, only the other party can read them. Hooray!

And, ideally, this is where the story would end. Asymmetric encryption is cool. Don’t look at the scroll bar.

Unfortunately

The real world isn’t as nice as our previous imagination.

We just kind of hand-waved that asymmetric encryption is a thing that happens, without further examination. It turns out, you have to examine further in order to be secure.

The most common asymmetric encryption algorithm deployed on the Internet as of February 2024 is called RSA. It involves Number Theory. You can learn all about it from other articles if you’re curious. I’m only going to describe the essential facts here.

Keep in mind, the primary motivation for KEMs comes from post-quantum cryptography, not RSA.

From Textbook RSA to Real World RSA

RSA is what we call a “trapdoor permutation”: It’s easy to compute encryption (one way), but decrypting is only easy if you have the correct secret key (the other way).

RSA operates on large blocks, related to the size of the public key. For example: 2048-bit RSA public keys operate on 2048-bit messages.

Encrypting with RSA involves exponents. The base of these exponents is your message. The outcome of the exponent operation is reduced, using the modulus operator, by the public key.

(The correct terminology is actually slightly different, but we’re aiming for intuition, not technical precision. Your public key is both the large modulus and exponent used for encryption. Don’t worry about it for the moment.)

If you have a very short message, and a tiny exponent (say, 3), you don’t need the secret key to decrypt it. You can just take the cube-root of the ciphertext and recover the message!

That’s obviously not very good!
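To make the cube-root problem concrete, here is a minimal Python sketch. It rests on assumptions that are not part of the original discussion: the modulus is built from two publicly known primes (the Curve448 and P-384 field primes) purely so the example is reproducible, and `integer_cube_root` is a hypothetical helper. Real RSA keys use secret, randomly generated primes, but the attack never needs them.

```python
def integer_cube_root(n: int) -> int:
    """Largest integer x with x**3 <= n, found by binary search."""
    lo, hi = 0, 1 << ((n.bit_length() + 2) // 3 + 1)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if mid ** 3 <= n:
            lo = mid
        else:
            hi = mid - 1
    return lo


# Toy public key. The primes are well known, so this key protects nothing.
p = 2**448 - 2**224 - 1
q = 2**384 - 2**128 - 2**96 + 2**32 - 1
N = p * q
e = 3

message = b"attack at dawn"
m = int.from_bytes(message, "big")

# Textbook RSA encryption: exponentiate and reduce, with no padding at all.
c = pow(m, e, N)

# m**3 is far smaller than N, so the modular reduction never changed anything.
# Anyone holding only the ciphertext can undo the exponentiation.
recovered = integer_cube_root(c)
recovered_message = recovered.to_bytes(len(message), "big")
print(recovered_message)
```

The secret key never appears in the attack; only the ciphertext and the public exponent are used.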

To prevent this very trivial weakness, cryptographers proposed standardized padding schemes to ensure that the output of the exponentiation is always larger than the public key. (We say, “it must wrap the modulus”.)

The most common padding mode is called PKCS#1 v1.5 padding. Almost everything that implements RSA uses this padding scheme. It’s also been publicly known to be insecure since 1998.

The other padding mode, which you should be using (if you even use RSA at all) is called OAEP. However, even OAEP isn’t fool proof: If you don’t implement it in constant-time, your application will be vulnerable to a slightly different attack.

This Padding Stinks; Can We Dispense Of It?

It turns out, yes, we can. Safely, too!

We need to change our relationship with our asymmetric encryption primitive.

Instead of encrypting the secret we actually want to use, let’s just encrypt some very large random value.

Then we can use the result with a Key Derivation Function (which you can think of, for the moment, like a hash function) to derive a symmetric encryption key.

    class OversimplifiedKEM {
        function encaps(pk: CryptoKey) {
            let N = pk.getModulus()
            let r = randomNumber(1, N-1)
            let c = AsymmetricEncryption.encrypt(pk, r)
            return [c, kdf(r)]
        }
        function decaps(sk: CryptoKey, c: Uint8Array) {
            let r2 = AsymmetricEncryption.decrypt(sk, c)
            return kdf(r2)
        }
    }

In the pseudocode above, the actual asymmetric encryption primitive doesn’t involve any sort of padding mode. It’s textbook RSA, or equivalent.

KEMs are generally constructed somewhat like this, but they’re all different in their own special, nuanced ways. Some will look like what I sketched out, others will look subtly different.

Understanding that KEMs are a construction on top of asymmetric encryption is critical to understanding them.

It’s just a slight departure from asymmetric encryption as most developers intuit it.

Cool, we’re almost there.

The one thing to keep in mind: While this transition from Asymmetric Encryption (also known as “Public Key Encryption”) to a Key Encapsulation Mechanism is easy to follow, the story isn’t as simple as “it lets you skip padding”. That’s an RSA specific implementation detail for this specific path into KEMs.

The main thing you get out of KEMs is called IND-CCA security, even when the underlying Public Key Encryption mechanism doesn’t offer that property.

IND-CCA security is a formal notion that basically means “protection against an attacker that can alter ciphertexts and study the system’s response, and then learn something useful from that response”.

IND-CCA is short for “indistinguishability under chosen ciphertext attack”. There are several levels of IND-CCA security (1, 2, and 3). Most modern systems aim for IND-CCA2.

Most people reading this don’t have to know or even care what this means; it will not be on the final exam. But cryptographers and their adversaries do care about this.

What Are You Feeding That Thing?

Deirdre’s blog post touched on a bunch of formal security properties for KEMs, which have names like X-BIND-K-PK or X-BIND-CT-PK.

Most of this has to deal with, “What exactly gets hashed in the KEM construction at the KDF step?” (although some properties can hold even without being included; it gets complicated).

For example, from the pseudocode in the previous section, it’s more secure to not only hash r, but also c and pk, and any other relevant transcript data.

    class BetterKEM {
        function encaps(pk: CryptoKey) {
            let N = pk.getModulus()
            let r = randomNumber(1, N-1)
            let c = AsymmetricEncryption.encrypt(pk, r)
            return [c, kdf(pk, c, r)]
        }
        function decaps(sk: CryptoKey, c: Uint8Array) {
            let pk = sk.getPublickey()
            let r2 = AsymmetricEncryption.decrypt(sk, c)
            return kdf(pk, c, r2)
        }
    }

In this example, BetterKEM is considerably more secure than OversimplifiedKEM, for reasons that have nothing to do with the underlying asymmetric primitive. The thing it does better is commit more of its context into the KDF step, which means that there’s less pliability given to attackers while still targeting the same KDF output.
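A runnable Python rendition of the two KEM sketches may make the difference concrete. It is a toy under loud assumptions: the RSA key is built from two publicly known primes (the Curve448 and P-384 field primes), `kdf` is a length-prefixed SHA3-256 stand-in rather than a real KDF, and the `bind_context` flag is a hypothetical switch between the OversimplifiedKEM and BetterKEM behaviors. Nothing here is safe to deploy.

```python
import hashlib
import secrets

# Toy RSA key from two well-known primes. Illustration only.
P = 2**448 - 2**224 - 1
Q = 2**384 - 2**128 - 2**96 + 2**32 - 1
N = P * Q
E = 3
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (needs Python 3.8+)
N_BYTES = (N.bit_length() + 7) // 8


def to_bytes(x: int) -> bytes:
    """Fixed-width big-endian encoding of a number below N."""
    return x.to_bytes(N_BYTES, "big")


PUBLIC_KEY = to_bytes(N) + E.to_bytes(4, "big")


def kdf(*parts: bytes) -> bytes:
    """SHA3-256 over length-prefixed parts (a stand-in for a real KDF)."""
    h = hashlib.sha3_256()
    for part in parts:
        h.update(len(part).to_bytes(8, "big"))
        h.update(part)
    return h.digest()


def encaps(bind_context: bool = True):
    r = secrets.randbelow(N - 1) + 1   # uniform in [1, N - 1]
    c = to_bytes(pow(r, E, N))         # textbook RSA, no padding
    if bind_context:
        return c, kdf(PUBLIC_KEY, c, to_bytes(r))  # BetterKEM
    return c, kdf(to_bytes(r))                     # OversimplifiedKEM


def decaps(c: bytes, bind_context: bool = True) -> bytes:
    r2 = pow(int.from_bytes(c, "big"), D, N)
    if bind_context:
        return kdf(PUBLIC_KEY, c, to_bytes(r2))
    return kdf(to_bytes(r2))


ciphertext, sender_key = encaps()
receiver_key = decaps(ciphertext)
print(sender_key == receiver_key)
```

Because `r` is drawn from the full range below `N`, the exponentiation wraps the modulus and no padding scheme is needed. The only difference between the two modes is what gets committed into the KDF input.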

If you think about KDFs like you do hash functions (which they’re usually built with), changing any of the values in the transcript will trigger the avalanche effect: The resulting calculation, which is not directly transmitted, is practically indistinguishable from random bytes. This is annoying to try to attack–even with collision attack strategies (birthday collision, Pollard’s rho, etc.).
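The avalanche effect is easy to observe directly. The sketch below is only an illustration using SHA3-256 from Python's standard library as a stand-in for the KDF; the transcript string and the `bit_difference` helper are made up for the example.

```python
import hashlib


def bit_difference(a: bytes, b: bytes) -> int:
    """Count the bit positions at which two equal-length byte strings differ."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


transcript = b"public-key-bytes|ciphertext-bytes|shared-secret"
# Flip a single bit in the first byte of the transcript.
flipped = bytes([transcript[0] ^ 0x01]) + transcript[1:]

digest_a = hashlib.sha3_256(transcript).digest()
digest_b = hashlib.sha3_256(flipped).digest()

changed_bits = bit_difference(digest_a, digest_b)
print(f"{changed_bits} of 256 output bits changed")
```

For a good hash function, roughly half of the output bits should change, which is why an attacker gains no useful control over the derived key by tweaking one input.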

However, if your hash function is very slow (e.g., SHA3-256), you might be worried about the extra computation and energy expenditure, especially if you’re working with larger keys.

Specifically, the size of keys you get from ML-KEM or other lattice-based cryptography.

That’s where X-Wing is actually very clever: It combines X25519 and ML-KEM-768 in such a way that binds the output to both keys without requiring the additional bytes of ML-KEM-768 ciphertext or public key.

However, it’s only secure to use it this way because of the specific properties of ML-KEM and X25519.

Some questions may come to mind:

- Does this additional performance hit actually matter, though?

- Would a general purpose KEM combiner be better than one that’s specially tailored to the primitives it uses?

- Is it secure to simply concatenate the output of multiple asymmetric operations to feed into a single KDF, or should a more specialized KDF be defined for this purpose?

Well, that’s exactly what the CFRG is debating!

Closing Thoughts

KEMs aren’t nearly as arcane or unapproachable as you may suspect. You don’t really even need to know any of the mathematics to understand them, though it certainly does help.

I hope that others find this useful.

Header art by Harubaki and AJ.

https://soatok.blog/2024/02/26/kem-trails-understanding-key-encapsulation-mechanisms/

#asymmetricCryptography #cryptography #KDF #KEM #keyEncapsulationMechanism #postQuantumCryptography #RSA

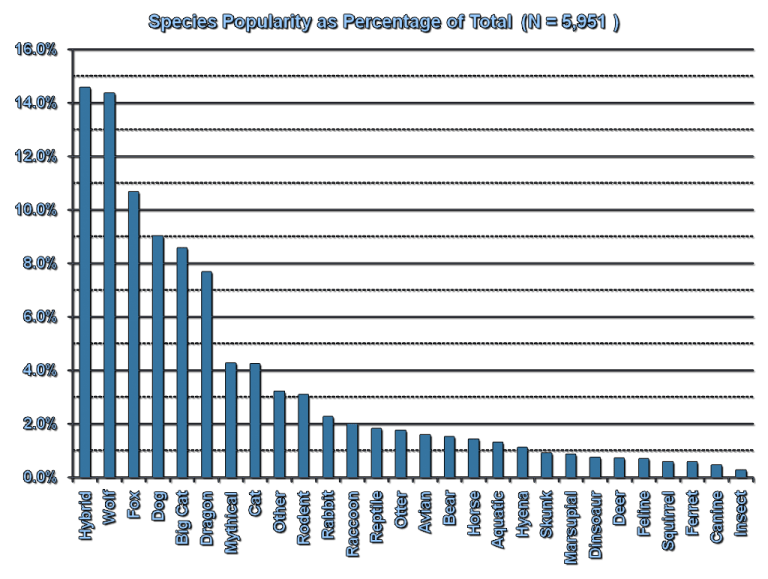

Did you know that, in the Furry Fandom, the most popular choice in species for one’s fursona is actually a hybrid?

Source: FurScience

Of course, we’re not talking about that kind of hybrid today. I just thought it was an amusing coincidence.

Art: Lynx vs Jackalope

Nor are we talking about what comes to mind for engineers accustomed to classical cryptography when you say Hybrid.

(Such engineers typically envision some combination of asymmetric key encapsulation with symmetric encryption; because too many people encrypt with RSA directly and the sane approach is often described as a Hybrid Cryptosystem in the literature.)

Rather, Hybrid Cryptography in today’s context refers to:

Cryptography systems that use a post-quantum cryptography algorithm, combined with one of the algorithms deployed today that aren’t resistant to quantum computers.

If you need to differentiate the two, PQ-Hybrid might be a better designation.

Why Hybrid Cryptosystems?

At some point in the future (years or decades from now), humanity may build a practical quantum computer. This will be a complete disaster for all of the cryptography deployed on the Internet today.

In response to this distant existential threat, cryptographers have been hard at work designing and attacking algorithms that remain secure even when quantum computers arrive. These algorithms are classified as post-quantum cryptography (mostly to distinguish it from techniques that use quantum computers to facilitate cryptography rather than attack it, which is “quantum cryptography” and not really worth our time talking about). Post-quantum cryptography is often abbreviated as “PQ Crypto” or “PQC”.

However, a lot of the post-quantum cryptography designs are relatively new or comparatively less studied than their classical (pre-quantum) counterparts. Several of the Round 1 candidates to NIST’s post-quantum cryptography project were broken immediately (PDF). The exploit code referenced in the PDF is duplicated below:

    #!/usr/bin/env python3
    import binascii, struct

    def recover_bit(ct, bit):
        assert bit < len(ct) // 4000
        ts = [struct.unpack('BB', ct[i:i+2]) for i in range(4000*bit, 4000*(bit+1), 2)]
        xs, ys = [a for a, b in ts if b == 1], [a for a, b in ts if b == 2]
        return sum(xs) / len(xs) >= sum(ys) / len(ys)

    def decrypt(ct):
        res = sum(recover_bit(ct, b) << b for b in range(len(ct) // 4000))
        return int.to_bytes(res, len(ct) // 4000 // 8, 'little')

    kat = 0
    for l in open('KAT_GuessAgain/GuessAgainEncryptKAT_2000.rsp'):
        if l.startswith('msg = '):
            # only used for verifying the recovered plaintext.
            msg = binascii.unhexlify(l[len('msg = '):].strip())
        elif l.startswith('c = '):
            ct = binascii.unhexlify(l[len('c = '):].strip())
            print('{}attacking known-answer test #{}'.format('\n' * (kat > 0), kat))
            print('correct plaintext: {}'.format(binascii.hexlify(msg).decode()))
            plain = decrypt(ct)
            print('recovered plaintext: {} ({})'.format(binascii.hexlify(plain).decode(), plain == msg))
            kat += 1

More pertinent to our discussions: Rainbow, which was one of the Round 3 Finalists for post-quantum digital signature algorithms, was discovered in 2020 to be much easier to attack than previously thought. Specifically, for the third round parameters, the attack cost was reduced by a large factor for each of the three parameter sets.

That security reduction is just a tad bit more concerning than a Round 1 candidate being totally broken, since NIST had concluded by then that Rainbow was a good signature algorithm until that attack was discovered. Maybe there are similar attacks just waiting to be found?

Given that new cryptography is accompanied by less confidence than incumbent cryptography, hybrid designs are an excellent way to mitigate the risk of attack advancements in post-quantum cryptography:

If the security of your system requires breaking the cryptography used today AND breaking one of the new-fangled designs, you’ll always be at least as secure as the stronger algorithm.

Art: Lynx vs Jackalope

Why Is Hybrid Cryptography Controversial?

Despite the risks of greenfield cryptographic algorithms, the NSA has begun recommending a strictly-PQ approach to cryptography and has explicitly stated that it will not require hybrid designs.

Another pushback on hybrid cryptography comes from Uri Blumenthal of MIT’s Lincoln Labs on the IETF CFRG mailing list (the acronym CRQC expands to “Cryptographically-Relevant Quantum Computer”):

Here are the possibilities and their relation to the usefulness of the Hybrid approach.

1. CRQC arrived, Classic hold against classic attacks, PQ algorithms hold – Hybrid is useless.

2. CRQC arrived, Classic hold against classic attacks, PQ algorithms fail – Hybrid is useless.

3. CRQC arrived, Classic broken against classic attacks, PQ algorithms hold – Hybrid is useless.

4. CRQC arrived, Classic hold against classic attacks, PQ algorithms broken – Hybrid useless.

5. CRQC doesn’t arrive, Classic hold against classic attacks, PQ algorithms hold – Hybrid is useless.

6. CRQC doesn’t arrive, Classic hold against classic attacks, PQ algorithms broken – Hybrid helps.

7. CRQC doesn’t arrive, Classic broken against classic attacks, PQ algorithms hold – Hybrid is useless.

8. CRQC doesn’t arrive, Classic broken against classic attacks, PQ algorithms broken – Hybrid is useless.

Uri Blumenthal, IETF CFRG mailing list, December 2021 (link)

Why Hybrid Is Actually A Damn Good Idea

Art: Scruff Kerfluff

Uri’s risk analysis is, of course, flawed. And I’m not the first to disagree with him.

First, Uri’s framing sort of implies that each of the 8 possible outputs of these 3 boolean variables are relatively equally likely outcomes.

It’s very tempting to look at this and think, “Wow, that’s a lot of work for something that only helps in 12.5% of possible outcomes!” Uri didn’t explicitly state this assumption, and he might not even believe that, but it is a cognitive trap that emerges in the structure of his argument, so watch your step.

Second, for many candidate algorithms, we’re already in scenario 6 that Uri outlined! It’s not some hypothetical future, it’s the present state of affairs.

To wit: The advances in cryptanalysis on Rainbow don’t totally break it in a practical sense, but they do reduce the security by a devastating margin (which will require significantly larger parameter sets and performance penalties to remedy).

For many post-quantum algorithms, we’re still uncertain about which scenario is most relevant. But since PQ algorithms are being successfully attacked and a quantum computer still hasn’t arrived, and classical algorithms are still holding up fine, it’s very clear that “hybrid helps” is the world we most likely inhabit today, and likely will for many years (until the existence of quantum computers is finally settled).

Finally, even in other scenarios (which are more relevant for other post-quantum algorithms), hybrid doesn’t significantly hurt security. It does carry a minor cost to bandwidth and performance, and it does mean having a larger codebase to review when compared with jettisoning the algorithms we use today, but I’d argue that the existing code is relatively low risk compared to new code.

From what I’ve read, the NSA didn’t make as strong an argument as Uri; they said hybrid would not be required, but didn’t go so far as to attack it.

Hybrid cryptography is a long-term bet that will protect the most users from cryptanalytic advancements, contrasted with strictly-PQ and no-PQ approaches.

Why The Hybrid Controversy Remains Unsettled

Even if we can all agree that hybrid is the way to go, there’s still significant disagreement on exactly how to do it.

Hybrid KEMs

There are two schools of thought on hybrid Key Encapsulation Mechanisms (KEMs):

- Wrap the post-quantum KEM in the encrypted channel created by the classical KEM.

- Use both the post-quantum KEM and classical KEM as inputs to a secure KDF, then use a single encrypted channel secured by both.

The first option (layered) has the benefit of making migrations smoother. You can begin with classical cryptography (e.g., ECDHE for TLS ciphersuites), which is what most systems online support today. Then you can do your post-quantum cryptography inside the existing channel to create a post-quantum-secure channel. This also lends itself to opportunistic upgrades (which might not be a good idea).

The second option (composite) has the benefit of making the security of your protocol all-or-nothing: You cannot attack the weak part now and the strong part later. The session keys you’ll derive require attacking both algorithms in order to get access to the plaintext. Additionally, you only need a single layer. The complexity lies entirely within the handshake, instead of every packet.

Personally, I think composite is a better option for security than layered.
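A rough Python sketch of the composite approach follows. It is not X-Wing and not any combiner under discussion at the CFRG; it simply feeds both shared secrets and both ciphertexts into SHA3-256 with length prefixes. The label, the `combine` function, and the random byte strings standing in for real X25519 and ML-KEM-768 outputs are all assumptions made for illustration.

```python
import hashlib
import secrets

LABEL = b"example-hybrid-kem-v1"  # hypothetical domain-separation label


def combine(classical_ss: bytes, pq_ss: bytes,
            classical_ct: bytes, pq_ct: bytes) -> bytes:
    """Derive one session key that depends on both KEM outputs."""
    h = hashlib.sha3_256()
    for part in (LABEL, classical_ss, pq_ss, classical_ct, pq_ct):
        h.update(len(part).to_bytes(8, "big"))  # length prefix avoids ambiguity
        h.update(part)
    return h.digest()


# Random stand-ins for what the two KEMs would actually produce.
# 32 and 1088 bytes match X25519 public keys and ML-KEM-768 ciphertexts.
classical_ss, classical_ct = secrets.token_bytes(32), secrets.token_bytes(32)
pq_ss, pq_ct = secrets.token_bytes(32), secrets.token_bytes(1088)

session_key = combine(classical_ss, pq_ss, classical_ct, pq_ct)

# Knowing only the classical secret is not enough to recompute the key:
# a wrong guess for the post-quantum secret yields an unrelated output.
wrong_guess = combine(classical_ss, secrets.token_bytes(32), classical_ct, pq_ct)
print(session_key != wrong_guess)
```

Only the handshake changes; everything after it uses the single `session_key`, which is the "single layer" property described above.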

Hybrid Signatures

There are, additionally, two different schools of thought on hybrid digital signature algorithms. However, the difference is more subtle than with KEMs.

- Require separate classical signatures and post-quantum signatures.

- Specify a composite mode that combines the two together and treat it as a distinct algorithm.

To better illustrate what this looks like, I outlined what a composite hybrid digital signature algorithm could look like on the CFRG mailing list:

    primary_seed := randombytes_buf(64) // store this
    ed25519_seed := hash_sha512256(PREFIX_CLASSICAL || primary_seed)
    pq_seed := hash_sha512256(PREFIX_POSTQUANTUM || primary_seed)
    ed25519_keypair := crypto_sign_seed_keypair(ed25519_seed)
    pq_keypair := pqcrypto_sign_seed_keypair(pq_seed)

Your composite public key would be your Ed25519 public key, followed by your post-quantum public key. Since Ed25519 public keys are always 32 bytes, this is easy to implement securely.

Every composite signature would be an Ed25519 signature concatenated with the post-quantum signature. Since Ed25519 signatures are always 64 bytes, this leads to a predictable signature size relative to the post-quantum signature.
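The fixed Ed25519 sizes make the composite encoding simple to parse. The Python sketch below shows the seed derivation and the split-and-verify logic under several assumptions: truncated SHA-512 stands in for `hash_sha512256`, the prefix values are invented, and the actual Ed25519 and post-quantum verifiers are passed in as callables because the standard library provides neither.

```python
import hashlib
import secrets
from typing import Callable, Tuple

ED25519_PK_LEN = 32
ED25519_SIG_LEN = 64
PREFIX_CLASSICAL = b"example-classical"      # hypothetical prefix values
PREFIX_POSTQUANTUM = b"example-postquantum"

# A verifier takes (public_key, message, signature) and returns True or False.
Verifier = Callable[[bytes, bytes, bytes], bool]


def derive_seeds(primary_seed: bytes) -> Tuple[bytes, bytes]:
    """Derive two 32-byte seeds from the single stored 64-byte seed."""
    ed_seed = hashlib.sha512(PREFIX_CLASSICAL + primary_seed).digest()[:32]
    pq_seed = hashlib.sha512(PREFIX_POSTQUANTUM + primary_seed).digest()[:32]
    return ed_seed, pq_seed


def split_public_key(composite_pk: bytes) -> Tuple[bytes, bytes]:
    return composite_pk[:ED25519_PK_LEN], composite_pk[ED25519_PK_LEN:]


def split_signature(composite_sig: bytes) -> Tuple[bytes, bytes]:
    return composite_sig[:ED25519_SIG_LEN], composite_sig[ED25519_SIG_LEN:]


def verify_composite(composite_pk: bytes, message: bytes, composite_sig: bytes,
                     verify_ed25519: Verifier, verify_pq: Verifier) -> bool:
    """Accept only if BOTH component signatures verify."""
    if len(composite_pk) <= ED25519_PK_LEN or len(composite_sig) <= ED25519_SIG_LEN:
        return False  # nothing left over for the post-quantum half
    ed_pk, pq_pk = split_public_key(composite_pk)
    ed_sig, pq_sig = split_signature(composite_sig)
    # Run both checks before combining, so neither is skipped.
    ok_classical = verify_ed25519(ed_pk, message, ed_sig)
    ok_pq = verify_pq(pq_pk, message, pq_sig)
    return ok_classical and ok_pq


primary_seed = secrets.token_bytes(64)  # store this
ed25519_seed, pq_seed = derive_seeds(primary_seed)
```

A caller would plug real verification functions from its cryptography library of choice into `verify_composite`; the point of the sketch is that the caller never has to reason about the two halves separately.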

The main motivation for preferring a composite hybrid signature over a detached hybrid signature is to push the hybridization of cryptography lower in the stack so developers don’t have to think about these details. They just select HYBRIDSIG1 or HYBRIDSIG2 in their ciphersuite configuration, and cryptographers get to decide what that means.

TL;DR

Hybrid designs of post-quantum crypto are good, and I think composite hybrid designs make the most sense for both KEMs and signatures.

https://soatok.blog/2022/01/27/the-controversy-surrounding-hybrid-cryptography/

#asymmetricCryptography #classicalCryptography #cryptography #digitalSignatureAlgorithm #hybridCryptography #hybridDesigns #KEM #keyEncapsulationMechanism #NISTPostQuantumCryptographyProject #NISTPQC #postQuantumCryptography

If you follow me on Twitter, you probably already knew that I attended DEFCON 30 in Las Vegas.

If you were there in person, you probably also saw this particular nerd walking around:

The collar reads “Warning: PUNS” in a marquee.

In addition to being shamelessly furry and meeting a lot of really cool hackers (including several readers of this blog!), I attended several village talks (and at least one Sky Talk).

For some of these talks, I attended in fursuit. For others, I opted instead to bring my laptop and take notes. (Doing both things is ill-advised because it’s hard to type with paws, and the risk of laptop damage from excess sweat gives me pause, so it was one or the other.)

To be terse, my DEFCON experience was overwhelmingly positive. However, one talk at the Quantum Village had an extremely sus abstract (which was pointed out to me by Cendyne), so I decided to attend in person and see for myself.

Unfortunately, my intuition as a security engineer did not let me down, as we’ll explore below. But first, some background information.

What is DEFCON, Exactly?

(Feel free to skip this section if you already know.)

DEFCON is a hacking and security conference that takes place every year in Las Vegas, NV.

Unlike the stuffy Industry- and vendor-oriented events, DEFCON is more Community focused. Consequently, DEFCON has an inextricable counterculture element to it, and that makes it unique among security conferences.

(Some of the Security BSides events may also retain the same counterculture attitudes. However, that depends heavily on the attitudes of the local security/hacking scene. Give them a chance if you live near one, or consider starting your own.)

Put another way: DEFCON is to punk rock what USENIX is to classical. You’ll find good practitioners in both genres, and a surprising amount of overlap, but they can be appreciated independently too.

Which is why very few people balked at a group of furries running through Closing Ceremonies.

https://twitter.com/DCFurs/status/1558947697469448192

The weirdness and acceptance of DEFCON is a reflection of the diversity of the infosec community, and that’s a damn good thing.

In addition to DEFCON’s main track, there are a lot of so-called Villages that focus on different aspects of hacking, security, and the community.

For example, the Crypto & Privacy Village.

One of the many aspects of DEFCON is that you find yourself surrounded by people with deep expertise in many different areas (not just technological) who aren’t afraid to call you on any bullshit you spew. (Some of this fearlessness may be due to how much alcohol some people consume at the conference; I generally don’t drink alcohol, especially when fursuiting.)

Vikram Sharma’s Talk at the Quantum Village at DEFCON 30

The M.C. of the Quantum Village introduced Vikram by saying, “You should know who he is,” and saying that he’s deeply involved in policy discussions with government agencies. He was also described as a TED speaker.

https://www.youtube.com/watch?v=SQB9zNWw9MA

I came to the talk intentionally unfamiliar with Vikram’s previous presentations, and with an open mind to the material he was going to share, so as to avoid preconceived notions or unconscious bias as much as humanly possible.

The M.C.’s introduction primed me to expect a level of technical competence with cryptography and cryptanalysis on par with, or better than, a typical engineering manager that oversees cryptographers.

Vikram’s talk did not deliver on this built-up expectation.

Vikram Sharma’s Arguments

Vikram’s talk can be loosely distilled into a few important points:

- Cryptography-Relevant Quantum Computers are coming, and we don’t know when, but most experts believe within the next 30 years (at most).

- Although NIST has been working since 2015 on post-quantum cryptography, the advancements in cryptanalysis against Rainbow and SIKE highlight how nascent these algorithms are, and how brittle they may prove to be against mathematical advancements.

- In order to be ready to defend against Quantum Adversaries, we must adopt a mindset of “crypto agility” when engineering our applications.

- For enterprise customers, a Key Management Service that uses Quantum Key Distribution between endpoints in addition to post-quantum cryptography is likely necessary to hedge against advancements to post-quantum cryptanalysis.

The first two points are totally uncontroversial.

The third is interesting, and the fourth is a thinly-veiled sales pitch for Vikram’s company, QuintessenceLabs, rather than a serious technical argument.

Let’s explore the latter two points in more detail.

“Crypto Agility” From an Applied Cryptography Perspective

Protocols that utilize “crypto agility” are not new. SSL/TLS, SSH, and JWT all permit some degree of flexibility in the ciphersuite selection, rather than hard-coding the parameters.

For earlier versions of SSL/TLS, you could encrypt with RC4 with SHA1, and that was totally permitted. Stupid, but permitted. Nowadays, we use AES-GCM or ChaCha20-Poly1305 with TLS 1.3.

The story with SSH is similar, and there’s a lot of overlap in the user experience of configuring SSL/TLS for webservers and configuring OpenSSH. Even PGP supported different ciphers and modes. Crypto agility was the norm for many years.

JSON Web Tokens (JWT) is the perfect example of what happens when you take “crypto agility” too far:

- An attacker can change the alg header’s value to none to allow for trivial existential forgery. This surprises users.

- An attacker can take an existing token that uses an asymmetric mode (RS256, ES256, etc.), change the alg to a symmetric mode (i.e. HS256), then use the public key (or a hash of the public key) as a symmetric key, and the verifier would just blindly accept it.
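The first of these attacks can be sketched with a toy verifier. This is not the code of any real JWT library. The function names are made up for illustration, and only HS256 and none are modeled. The second function shows the usual fix: the verifier pins the algorithm itself instead of trusting the token's header. The same pinning also closes the asymmetric-to-HMAC confusion, which is not demonstrated here.

```python
import base64
import hashlib
import hmac
import json


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    # Restore the padding that JWT-style encoding strips.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def hs256_tag(key: bytes, header_b64: str, payload_b64: str) -> bytes:
    return hmac.new(key, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()


def make_token(payload: dict, key: bytes) -> str:
    header_b64 = b64url_encode(json.dumps({"alg": "HS256"}).encode())
    payload_b64 = b64url_encode(json.dumps(payload).encode())
    return f"{header_b64}.{payload_b64}.{b64url_encode(hs256_tag(key, header_b64, payload_b64))}"


def naive_verify(token: str, key: bytes) -> bool:
    """Vulnerable: the token's own header chooses the algorithm."""
    header_b64, payload_b64, sig_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    if header["alg"] == "none":
        return True  # attacker-chosen: no signature check at all
    if header["alg"] == "HS256":
        return hmac.compare_digest(hs256_tag(key, header_b64, payload_b64), b64url_decode(sig_b64))
    return False


def pinned_verify(token: str, key: bytes) -> bool:
    """Safer: the verifier decides the algorithm; the header must agree."""
    header_b64, payload_b64, sig_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        return False
    return hmac.compare_digest(hs256_tag(key, header_b64, payload_b64), b64url_decode(sig_b64))


key = b"server-side secret"
good = make_token({"admin": False}, key)
# Forged token: alg set to none, attacker-chosen payload, empty signature.
forged = ".".join([
    b64url_encode(json.dumps({"alg": "none"}).encode()),
    b64url_encode(json.dumps({"admin": True}).encode()),
    "",
])
```

Here naive_verify accepts the forged token without ever touching the key, while pinned_verify rejects it and still accepts the legitimate one.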

When you maximize “crypto agility”, you introduce dangerous levels of in-band protocol negotiation.

Crypto Agility tends to become a security problem: When an attacker can entirely decide how the target system behaves, they can often bypass your security controls entirely.

Therefore, anyone who speaks in favor of crypto agility should be very careful about what, precisely, they’re advocating for.

Vikram’s talk wasn’t clear about these nuances. At the opening of the Q&A session, I asked the following question:

How do you balance your proposal for crypto agility with the risks of in-band protocol negotiation?

He declined to answer this question directly. Instead, he directed me to email his CTO, John Leiseboer, adding, “He would be better able to speak to that.”

So I returned to my DEFCON hotel room and sent an email to the email address that was displayed on Vikram’s slideshow.

Soatok’s First Email to QuintessenceLabs

This was mostly an attempt to be diplomatic so as to not scare them off from responding:

Hi,

I attended your CEO’s talk at the Quantum Village at DEFCON 30. I was very interested in the subject, and Vikram’s delivery of the material was appropriate for the general audience. I did have one question, but he suggested that would be better answered by your CTO.

For a bit of background: I work in applied cryptography.

My question is, “How do you balance your proposal for crypto agility with the risks of in-band protocol negotiation?”

To add color to this question, consider the widely exploited standard, JSON Web Tokens. To change the cryptographic properties of a JWT, you only need to change the “alg” header. This is maximally agile.

Unfortunately, you can specify “none” as an algorithm. This surprises users: https://www.howmanydayssinceajwtalgnonevuln.com/

Additionally, an attacker can take a token signed with an asymmetric key (e.g. RSA), change the alg header to HMAC, and then use [a hash of] the asymmetric public key as if it were a symmetric HMAC key, and achieve trivial existential forgery.

The same concerns, I feel, will crop up when migrating to a hybrid post-quantum design. Cross-protocol interactions of the incorrect secret keys would, from by understanding of Vikram’s talk, be made possible if an attacker can mutate the metadata table.

There is a way out of this mess: Versioned Protocols. Using JWT as an example, a contrary proposal that can support cipher agility but only for ciphers (and constructions of ciphers) that cryptographers have approved is called PASETO. https://paseto.io

If a vulnerability in the current supported protocols (v3, v4) is discovered, the cryptographers behind PASETO will specify new modes (e.g. v5 and v6). Currently the only reason they’re considering any such migration is for hybrid post-quantum signatures (P384 + Dilithium, Ed25519 + Dilithium).

I think the general idea of crypto agility is the right direction for addressing the risk of cryptography relevant quantum computers, but I would caution against recreating the protocol foot-guns made possible by the wrong sort of agility.

Vikram suggested you would be able to speak more to how your designs take these threats into consideration. I’m very interested in this space and would love to learn more about how your designs work at a deeper level.

Thank you for your time,

Soatok

(Typos included in original email)

I didn’t hear back from them for several days, so I hunted down the CTO’s email address directly (thanks, old mailing list discussions!).

He quickly responded with this:

John Leiseboer’s First Response Email

Hi Soatok,

My apologies for not responding sooner. I’ve been travelling and in meetings across three time zones. It’s been difficult to find time to absorb your questions and put together a response.

Please give me a few more days to find some time to get back to you.

Regards,

John

I want to pause here and make something very clear: My actual question is the most basic and obvious question any credible security engineer that works with cryptography would ask in response to Vikram’s presentation.

If you were to claim, “Everyone should use crypto agility,” and don’t qualify that statement with nuance or context, then a follow-up question about the risks of crypto agility is inevitable.

If this question somehow doesn’t come up, you’re in the wrong room with the wrong security engineers when you rehearse your pitch.

Why, then, would the speaker not be prepared for such a question? Isn’t he routinely offering similar talks to policy experts for government agencies?

Why, also, would his company’s CTO not be able to readily answer the question without needing additional time to “absorb” this question and put together a response?

It’s not like I asked them to provide a machine-verifiable, formal proof of correctness for their proposals.

I only asked the most basic Cryptography Protocol Design 101 question in response to their assertion that “crypto agility” is something that “companies” should adopt.

The proof, the proof, the proof is invalid!

We don’t need no lemma–Proof by contradiction fail!

Fail, contradiction, fail!

With apologies to Rock Master Scott & The Dynamic Three

Was this company’s leadership blind-sided by the most basic question of the field of study they’re hoping to, at least in part, replace?

Did they fail to consider an attacker that alters the metadata associated with their encrypted data in order to convince the “agile” cryptosystems to misbehave?

I will update this post when I have an answer to these very interesting questions.

(Update) QuintessenceLabs Responds

This is one email, but I’m going to add my commentary between paragraph breaks.

Hello again Soatok,

I’ve just read your blog where you criticised Vikram’s DEFCON talk. I was disappointed that you couldn’t wait for me to get back to you with the more detailed response that I promised to provide you last night.

Mea culpa. If I don’t press “publish” when the iron is hot, these posts stay in rough draft hell for 12-15 months, and I wanted to get my feedback (and the necessary context for said feedback) to the Quantum Village.